(Updated 7/27/2010 – 11 am EST – added info on power and tower options)

When you think about blade servers, you probably think, “they are too expensive.” When you think about doing a VMware project, you probably think, “my servers are too old” or “I can’t afford new servers.” For $8 per GB, you can have blade servers preloaded with VMware ESXi 4.1 AND 4TB of storage! Want to know how? Keep reading.

No, I’m not smoking something. I’ve done the configuration and I can show you how to achieve a 4 TB SAN and 3 ESX hosts on blade servers with the IBM BladeCenter S. Before I can explain what I’ve done, let me give you the basics of the IBM BladeCenter S.

Overview of the IBM BladeCenter S

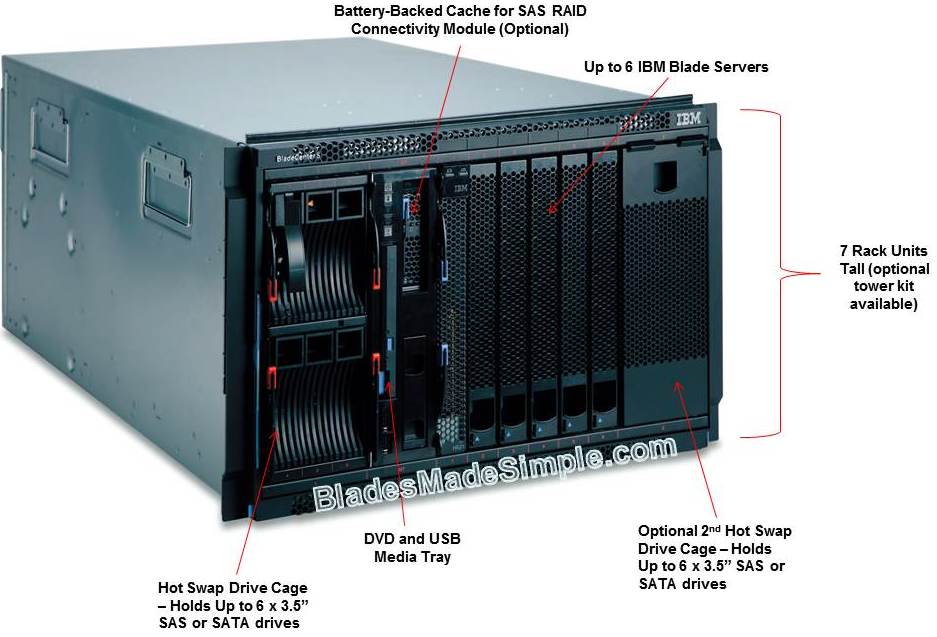

At 7U high, the chassis of the IBM BladeCenter S is the same height as the original IBM BladeCenter (now called the BladeCenter E). The chassis uses the same blade servers as the rest of the IBM blade chassis family, but the chassis holds only 6 blade servers – primarily due to the addition of locally attached storage drives. In addition, the chassis has the option to add a DVD drive for access to local media.

The IBM BladeCenter S can host up to 12 drives via Disk Storage Modules (IBM part # 43W3581) located to the right and left of the blade servers. These modules give each server access to the drives, either dedicated or SHARED. Each DSM holds 6 x 3.5″ SAS, Near-Line SAS or SATA drives with drive sizes ranging up to 2TB. It is important to note, though, that because the blade servers use 2.5″ hot-swap drives, you may find yourself needing to stock two different types of drives. The DSMs are sold separately, so if you only need 4 drives, you can wait and invest the additional $795 (U.S. List) for the second module later, when you need the additional drive capacity.

As mentioned above, the blade servers can have either dedicated or shared access to the drives located in the DSM. The type of access depends on the type of SAS module used in the chassis. IBM offers both a SAS Connectivity Module and a SAS RAID Controller Module. The SAS Connectivity Module (IBM part # 39Y9195) matches up a blade server with the local drives. For example, if you have 6 drives and 2 blade servers, the SAS Connectivity Module lets you map 3 drives to each blade server. The key here is that this is dedicated access, like having direct attached storage for each blade server. Each blade server needing access also needs a SAS Connectivity Card. The SAS Connectivity Module also has 4 external SAS ports that let you attach IBM EXP3000 storage arrays, providing additional storage capacity per blade server. This requires the blade servers to have the ServeRAID-MR10ie card installed instead of the SAS Connectivity Card, and only one EXP3000 is allowed per blade server. Even so, this is a great way to expand your storage if you outgrow the capabilities of the Disk Storage Modules.

In contrast, the SAS RAID Controller Module (IBM part # 43W3584) lets you pool the storage into arrays and offer access to those arrays to each blade server that has the SAS Connectivity Card installed. Volumes that are created can be assigned to a specific blade or shared by several blade servers. The IBM SAS RAID Controller Module supports RAID levels 0, 1, 5 and 10, and each module also comes with a RAID battery backup module. There are some caveats to be aware of: only SAS or NL SAS drives are supported (no SATA); the maximum volume size is currently limited to 2TB; and the maximum number of volumes each blade server can have is 8 (for a total of 48 volumes per chassis). Another important thing to note is that you must have 2 x SAS RAID Controller Modules, which sit in I/O Bays #3 and #4. This provides a redundant connection for each blade server with the SAS Connectivity Card. In fact, since I brought it up, let’s take a closer look at how the modules work in the IBM BladeCenter S.
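To get a feel for what those RAID levels and volume limits mean in practice, here is a rough Python sketch. It uses the generic textbook capacity arithmetic for RAID 0, 1, 5 and 10, not anything specific to the IBM module, and it assumes RAID 1 is a simple two-drive mirror. The function names are made up for illustration; only the 2TB volume limit, the 8 volumes per blade and the 6 blades per chassis come from the text above.

```python
import math

# Limits quoted in the post for the SAS RAID Controller Module.
MAX_VOLUME_TB = 2.0
MAX_VOLUMES_PER_BLADE = 8
BLADES_PER_CHASSIS = 6


def usable_tb(level: int, drives: int, drive_tb: float) -> float:
    """Usable capacity by generic RAID arithmetic (illustrative, not IBM-specific)."""
    if level == 0:
        # Striping only: every drive contributes its full capacity.
        return drives * drive_tb
    if level == 1:
        # Assumption: RAID 1 modeled as a plain two-drive mirror.
        if drives != 2:
            raise ValueError("this sketch models RAID 1 as exactly 2 drives")
        return drive_tb
    if level == 5:
        if drives < 3:
            raise ValueError("RAID 5 needs at least 3 drives")
        # One drive's worth of capacity goes to parity.
        return (drives - 1) * drive_tb
    if level == 10:
        if drives < 4 or drives % 2:
            raise ValueError("RAID 10 needs an even number of drives, 4 or more")
        # Half of the drives hold mirror copies.
        return drives / 2 * drive_tb
    raise ValueError(f"unsupported RAID level: {level}")


def volumes_needed(capacity_tb: float) -> int:
    """Minimum number of volumes when each volume is capped at MAX_VOLUME_TB."""
    return math.ceil(capacity_tb / MAX_VOLUME_TB)


if __name__ == "__main__":
    for level in (0, 5, 10):
        cap = usable_tb(level, drives=4, drive_tb=1.0)
        print(f"RAID {level}: {cap:.1f} TB usable, at least {volumes_needed(cap)} volume(s)")
    print("Volumes per chassis:", MAX_VOLUMES_PER_BLADE * BLADES_PER_CHASSIS)
```

With four 1TB drives, for example, RAID 5 leaves 3TB usable, which has to be carved into at least two volumes because of the 2TB cap.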

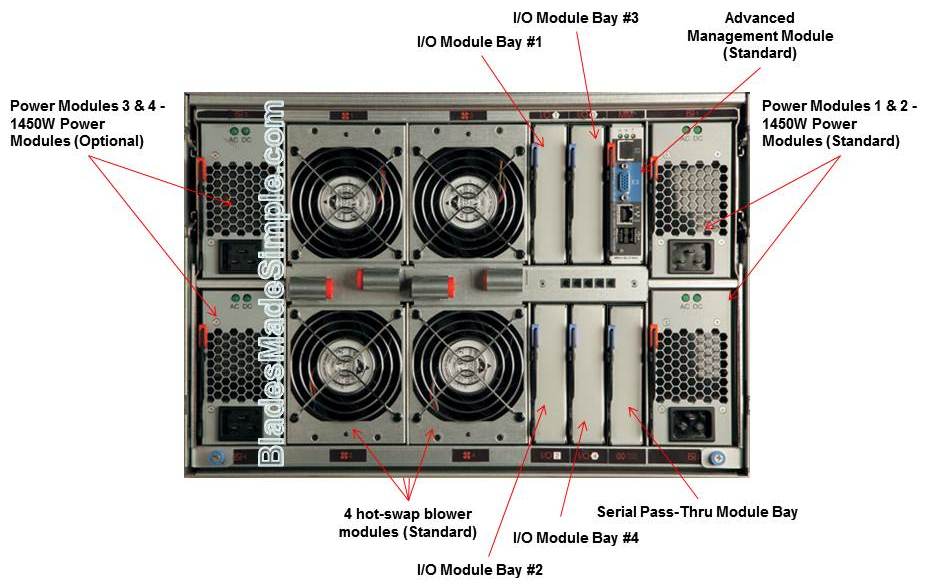

BladeCenter S: a Look From Behind

When you look at the back of the IBM BladeCenter S, it may look confusing, but don’t worry, it’s pretty straightforward. The chassis comes standard with 2 x 1450W power supplies and a single Advanced Management Module. If you are using high wattage blade servers or using the second DSM, you will probably need the 2nd set of power supplies (IBM part # 46C7438). If your budget is tight and you can’t afford to pony up the additional $599 U.S. list, I recommend you take advantage of IBM’s Power Calculator prior to purchase to see if you need the 2nd set of power supplies. Following the design of the other IBM BladeCenter chassis, the IBM BladeCenter S is cooled by a set of 4 redundant hot-swap blower modules. Don’t bother looking for any other fans or cooling devices, because you won’t find them. These four blowers cool the entire chassis, modules and blade servers.

Management

The Advanced Management Module (AMM) is the device that provides you with LOCAL keyboard, video and mouse connectivity (although only USB for keyboard and mouse) as well as an Ethernet port to connect into your management network. The AMM gives you the ability to manage and monitor all of the chassis’ thermals as well as remotely control the blade servers and the I/O modules. In all honesty, the AMM is feature rich, so if you want to take a peek at what it can do, take a look at this IBM BladeCenter S Advanced Management Module simulator. Unlike the other IBM blade chassis, there is no option for a redundant AMM; however, in the event of an AMM failure, your blade servers, I/O modules, fans and blowers will continue to function without penalty.

I/O Architecture

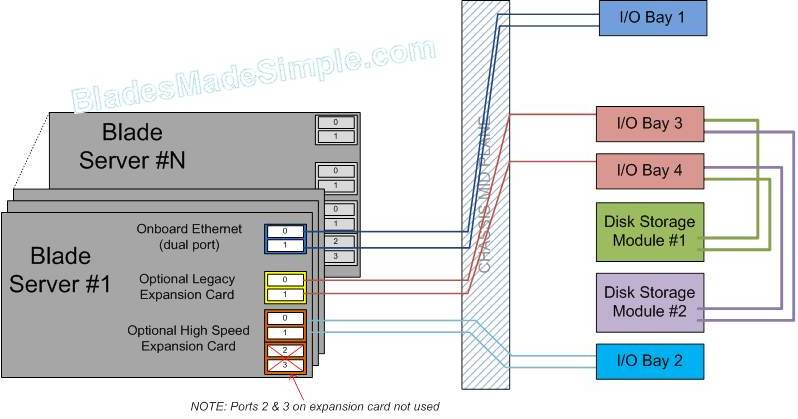

The biggest technical confusion I see from engineers and customers alike is around the I/O layout of the blade chassis. The IBM BladeCenter S is a bit dissimilar to the other IBM chassis in the BladeCenter family, so let me explain how it works. There are 4 I/O bays in the IBM BladeCenter S. The 1st I/O bay maps to the NICs that come on the motherboard of each blade server. If you are familiar with rack-mount servers, you know they typically have 2 x 1Gb Ethernet ports. The IBM blade servers are no different: they also have 2 x 1Gb Ethernet NICs. In order for them to be “lit up” you need to have a module in bay 1 that can allow the signal from the blade server to extend out of the chassis. To simplify things, think of having a power outlet in the wall at home and connecting an extension cord to it so you can turn on a light that is a few feet away. The same rudimentary concept applies in the blade infrastructure.

The only difference is that, with the IBM BladeCenter S, both NIC ports 0 and 1 go to I/O Module Bay #1. This means if that module has an issue, then those 2 NICs located on the motherboard of each blade server will be dead. There is no redundancy with the onboard NICs in the IBM BladeCenter S (unlike the other IBM BladeCenter chassis). Why did IBM design it this way? Well, the original target market for the IBM BladeCenter S was small businesses and remote offices. When you look at those environments, how many have redundant network connections for their rack or tower servers? Odds are none. With that in mind, IBM designed the BladeCenter S to only have a single I/O module for both onboard NICs.

Never fear, though. After a few months, IBM revised the design to allow I/O Module #2 to provide an additional 2 NICs, using the 2/4 port Ethernet adapter (IBM part # 44W4479) on each blade server. The card is designed to provide 4 Ethernet ports; however, with the BladeCenter S, only 2 ports are connected. Therefore, with network modules in I/O Module Bays 1 and 2, you can get 4 NICs. Add this to the SAS storage connectivity described in the sections above and you “should” have adequate architecture to provide a VMware environment.
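If it helps to see the bay layout written down, here is a small Python sketch of the mapping described above. The `BayLink` class, the `BAY_MAP` table and the helper functions are hypothetical names invented for this illustration, and treating the SAS Connectivity Card as one path to each of bays 3 and 4 is my reading of the redundancy described earlier, not a documented wiring spec.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class BayLink:
    bay: int              # I/O module bay number in the chassis
    blade_side: str       # what on the blade connects to this bay
    kind: str             # "ethernet" or "sas"
    ports_per_blade: int  # ports each blade gets through this bay


# Layout as described in the post (SAS path count is an assumption).
BAY_MAP = (
    BayLink(1, "onboard NICs (ports 0 and 1)", "ethernet", 2),
    BayLink(2, "2/4 port Ethernet adapter", "ethernet", 2),
    BayLink(3, "SAS Connectivity Card", "sas", 1),
    BayLink(4, "SAS Connectivity Card", "sas", 1),
)


def nics_per_blade(populated_bays: Iterable[int]) -> int:
    """Count the Ethernet ports a blade can use, given which bays hold modules."""
    populated = set(populated_bays)
    return sum(
        link.ports_per_blade
        for link in BAY_MAP
        if link.kind == "ethernet" and link.bay in populated
    )


def nics_after_failure(populated_bays: Iterable[int], failed_bay: int) -> int:
    """Ethernet ports left per blade if the module in one bay fails."""
    return nics_per_blade(set(populated_bays) - {failed_bay})


if __name__ == "__main__":
    print("Bay 1 only:", nics_per_blade({1}))
    print("Bays 1 and 2:", nics_per_blade({1, 2}))
    print("Bay 1 fails:", nics_after_failure({1, 2, 3, 4}, failed_bay=1))
```

The failure helper makes the single point of failure visible: with only bay 1 populated, losing that module leaves a blade with no NICs at all, while a module in bay 2 keeps two ports alive.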

The $32,000 Design

Now that I’ve spent a few moments telling you what the IBM BladeCenter S is all about, perhaps you understand the potential. So how did I get to the $32,000 design that enables you to have 4 TB and 3 ESX hosts? I won’t divulge the actual bill of materials, but here’s what I came up with:

- 1 x IBM BladeCenter S chassis

- 1 x Disk Storage Module

- 4 x 1 TB Near Line Storage Disk Drives

- 1 set of 1450W Power Supplies

- 2 x Server Connectivity (Ethernet) Modules

- 2 x SAS RAID Controller Modules

- 1 x DVD

- 3 x HS22 blade servers – each with 2 x Intel E5620 Xeon Processors, 24GB RAM, SAS Connectivity Card, ESXi 4.1 USB Memory Key

Total U.S. List Price (as of 7/26/2010): $30,768.00
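For anyone who wants to check the per-GB math, here is a quick Python sketch. It assumes decimal units (1 TB = 1000 GB) and raw, pre-RAID capacity, which is how the $8 per GB headline works out; the function and constant names are just for illustration.

```python
LIST_PRICE_USD = 30_768.00      # total U.S. list price quoted above
HEADLINE_PRICE_USD = 32_000.00  # rounded figure used in the headline
RAW_CAPACITY_TB = 4             # 4 x 1 TB drives, before any RAID overhead
ESX_HOSTS = 3
GB_PER_TB = 1000                # assumption: decimal (drive-vendor) units


def cost_per_gb(price_usd: float, capacity_tb: float) -> float:
    """Dollars per raw gigabyte for the whole configuration."""
    return price_usd / (capacity_tb * GB_PER_TB)


if __name__ == "__main__":
    print(f"Headline:   ${cost_per_gb(HEADLINE_PRICE_USD, RAW_CAPACITY_TB):.2f} per GB")
    print(f"List price: ${cost_per_gb(LIST_PRICE_USD, RAW_CAPACITY_TB):.2f} per GB")
    print(f"Per host:   ${LIST_PRICE_USD / ESX_HOSTS:,.2f}")
```

Keep in mind this divides the price of the entire chassis, blades included, by the raw disk capacity, so it is a headline number rather than a true storage cost.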

Yes, I also know that HP has an offering (the BladeSystem C3000) that could compete with this design; however, IBM is the only blade server vendor that offers dedicated local disk storage in the chassis. HP’s design takes up a blade server slot. Perhaps I’ll write up something on this in the future.

THIS SECTION ADDED ON 7/27/2010 – I’ve added this section to cover a couple of pieces that I left off in the original post.

Power

A really valuable feature of the IBM BladeCenter S is the ability to run on 110 V or 220 V. Use of 110 V is ideal for remote or small offices. The BladeCenter S power supplies are auto-sensing, so you can use the same power supplies either way. There are a few power policies to choose from with the BladeCenter S:

- Redundant AC Power Source – in this policy, the power limit is set to equal the capacity of N power modules. According to IBM’s Implementing the IBM BladeCenter S Chassis Redbook, this policy is the most conservative approach and is recommended when all four power modules are installed. When the chassis is correctly wired with dual AC power sources, one AC power source can fail without affecting your blade server operation.

- Redundant Power Module Policy – in this policy, the power limit equals the capacity of one less than the number of power modules installed (more than one power module must be present). One power module can fail without affecting blade server operation. If a single power module fails, all the blade servers that are powered on will continue to operate at normal performance levels.

- No Redundancy – all power modules are used, there is no redundancy and if you lose a power supply and the power demands exceed the capacity of the available power modules, the chassis will power down… Not recommended.
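Here is a rough Python sketch of how the power limit works out under the two policies whose arithmetic is spelled out above. It uses the 1450W nameplate rating per module as a nominal figure; actual usable output may differ (for example by input voltage), so treat IBM’s Power Calculator as the authority. The Redundant AC Power Source policy is left out because the list above does not pin down what N is. The function name and policy strings are made up for this example.

```python
MODULE_WATTS = 1450  # nominal nameplate rating per power module, from the post


def power_limit_watts(policy: str, installed_modules: int) -> int:
    """Nominal chassis power limit under a given policy (illustrative only)."""
    if installed_modules < 1:
        raise ValueError("at least one power module must be installed")
    if policy == "redundant_power_module":
        # Limit is the capacity of one less than the number installed.
        if installed_modules < 2:
            raise ValueError("this policy needs more than one power module")
        return (installed_modules - 1) * MODULE_WATTS
    if policy == "no_redundancy":
        # Every module counts toward the limit, so nothing is held in reserve.
        return installed_modules * MODULE_WATTS
    raise ValueError(f"policy not modeled in this sketch: {policy}")


if __name__ == "__main__":
    for modules in (2, 4):
        for policy in ("redundant_power_module", "no_redundancy"):
            watts = power_limit_watts(policy, modules)
            print(f"{modules} modules, {policy}: {watts} W")
```

The gap between the two numbers for the same module count is the headroom you give up in exchange for surviving a single power module failure.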

Office Enablement Kit

For those environments where a standard server rack is not ideal, IBM offers the Office Enablement Kit (part # 201886X). This adds a few hundred dollars, but it gives you an 11U rack enclosure, complete with front and rear locking doors and wheels. It also comes with an acoustic attenuation module that helps muffle the sound. (YouTube video on this can be seen here.) As mentioned above, the IBM BladeCenter S is only 7U tall, so the additional 4U can be used for an optional Flat Panel Monitor kit (shown in the image to the left) or perhaps additional storage or networking. This kit really helps to finalize an “all-in-one” solution for small or remote environments.

Yes, I know it’s only 4 x 1TB drives, and I know it’s Near-Line SAS drives, however it is enough resources to help YOU create that VMware infrastructure that you need. Of course, the VMware licensing will be extra, but I just saved you a ton of money – now you can afford it…

So – what do you think? Is this appealing, or is this just a pipe dream? Let me know your thoughts. I’m really interested in getting an idea of whether this design would really work in your world.

Kevin,

Nice little blade setup but still significantly more than 3 standard servers:

Dell R710 2xE5620 24GB x3 $3000 ea $9000

Dell MD3200 iSCSI array w/8-500GB drives $11,500

Dell 5424 Managed gigabit switch x4 $770 ea $3080

Total under $24,000, redundant net and iSCSI connections (r710 has 4 LOM NICs) and includes some of the network switching.

It will take up a bit more space, power and KVM resources but 8 500GB drives will be faster than 4 1TB.

– Howard

I would rather stick with a second-hand (ebay.de) filled BladeCenter H chassis with 14 x LS21 blades (2 x dual-core 2.4, 4GB, InfiniBand HCA) + 4 x 2900W PSU + 2 x InfiniBand switches + 1 x Fibre switch for about 3000 Eur total and add some real SAN to it. For 600 Eur I could add an extra 4GB RAM per blade, or ~4000 Eur for 16GB per blade.

Hey Kevin, it'll also be a lot less storage once you're done with the RAID setup, too. RAID10 might be a good way to go with those slow disks, but then you're down to 2 TB.

It comes down to sizing: if people can keep their workloads in RAM and not do a lot of I/O, then 4 7200 RPM spindles might work.

Good point, but the #ibm BladeCenter S I designed for the article was simply to show how you can easily create a VMware farm using blade servers. The IBM BladeCenter S is perfect for those small environments looking to virtualize but not get into a ton of drives. Keep in mind I only used 4 of the 12 disk bays, so you could easily add another 1TB or 2 with minimal investment. Thanks for the comment, Bob – great to hear from you!

You bring up a good point – the #ibm BladeCenter S doesn't have InfiniBand options, so if that's a requirement, you'll need to go with the BladeCenter H. The LS21's would work in the BladeCenter S though. Thanks for reading the post and for the comment!

Thanks for the comment, Howard. The #IBM BladeCenter S is not as robust as standalone solutions, however when you need to add a 4th, 5th or 6th server, there is no impact to rack space like in a standalone solution. If you need to add additional storage, there may be an impact in rack space there too. I'm not saying the IBM BladeCenter S is for every customer, but there is a good fit for those environments running out of rack space, living out of a telco closet or those users who can virtualize down to a handful of servers. Thanks for reading this post, and thanks for the comment!

I think the places that could use this aren't really the folks running out of rack space, it's the folks who have no rack space at all. IBM's “Office Enablement Kit” hints at that scenario, as does the ability to use 110V in the US. Its sweet spot would be branch offices where

1) There's no IT infrastructure on-site, but

2) “Corporate IT” still requires server equipment to adhere to their standards.

To Howard's point, discrete servers will probably have a lower price tag, although in that 'branch office' scenario, pricing probably isn't the sole concern.

Intel's “Modular Server” blades also have a shared SAS drive storage feature like IBM's.

Yeah, I like IBM blades because they offer REALLY wide compatibility options across a REALLY huge number of devices. Thanks for the updated section, I never knew there was such a rack for the S chassis. It must be ideal for small companies.

One thing about storage. I feel that 4 drives could be a bit short for 6×8=48 cores in terms of I/O. Of course, it depends, but as I said before, with such a budget I would take a real SAN and put some 2.5″ (less power hungry) 16 x 70GB 15K SAS drives into it. 70GB 15K SAS drives are very cheap these days (even new ones) and give you many more spindles and hence more I/O performance (which is critical for virtual environments). Then add some 500GB SATA drives for storage that does not need high I/O performance.

I feel that 48 cores would crunch those 4 SATA disks (or nearline SAS, which is basically the same) if running at full potential.

320 IOPS isn't a lot of performance :(

In the figure “IBM BladeCenter S I/O Architecture” you have connected storage to I/O bays 3 and 4. Is that by design, or can I connect iSCSI storage to bays 1 and 2?