VMworld 2018 opened with Dell EMC revealing the PowerEdge MX architecture to the public. The show floor included the full chassis along with a plexiglass version providing a behind-the-scenes view into the guts of the MX7000 chassis. I was able to grab a few pictures, but they don’t do it justice. If you are attending VMworld, I encourage you to stop by the Dell EMC booth (next to AMD and in front of the basketball court) and see it.

PowerEdge MX7000 Chassis

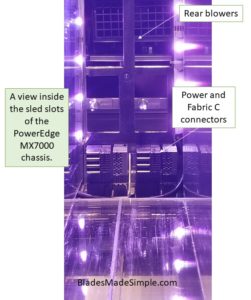

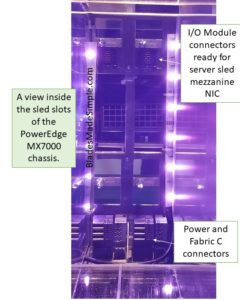

The plexiglass version allowed a full view of the PowerEdge MX7000 chassis. The Dell EMC team added colored LEDs to help showcase and draw attention to the chassis, so I apologize in advance for the images. Unfortunately, you will not be able to order the PowerEdge MX7000 chassis with colored lights or in a plexiglass shell (it was asked several times).

As you look down one of the server slots, you can see the I/O module connector from Fabric A2 in the rear of the chassis, ready to accept the server sled’s mezzanine NIC (more on this below).

In the center of the image, you can see one of the chassis’ rear blowers positioned to cool the server sleds without passing heat over the I/O modules.

On the bottom of the image you can also see the power and Fabric C connectors.

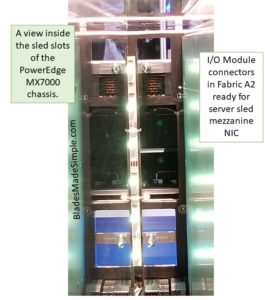

A closer view of the lower connectors.

Another view of the server sled. At the top of this image are two open holes. This is where the I/O module in Fabric A1 would connect through. If you look closely at the second set of square holes at the top of the image, you’ll see the I/O module docked in A2 and the connectors ready to accept the mezzanine NIC from a server sled.

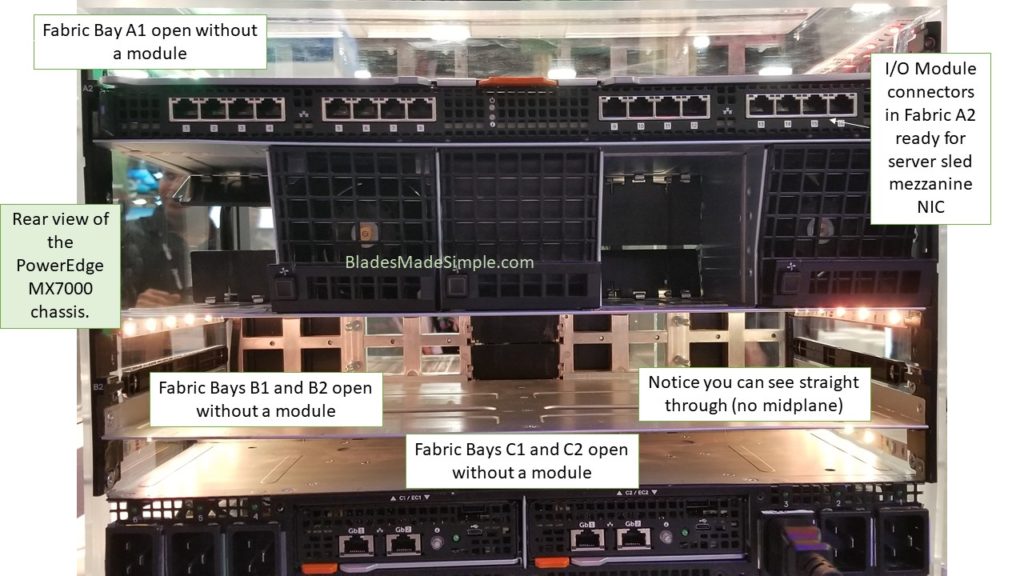

Rear view of the PowerEdge MX7000 chassis. Most important here is the ability to see straight through to the front in the section beneath the blowers. This is where the I/O modules for Fabrics B1 and B2 would be installed and dock with the mezzanine NICs on each server sled. Since there are no I/O modules or server sleds installed, you can easily see all the way through.

The earlier image showed a blower missing on the left side. In this image, I zoomed in to show the mechanism that blocks airflow through the empty bay when a blower is removed. This helps maintain consistent airflow with or without a blower present.

Server and Storage Sleds

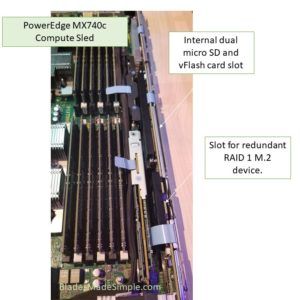

The PowerEdge MX740c is the 2-processor, 24-DIMM blade server (aka server node) of the MX architecture. This image provides an overview of the server. Note the mezzanine NIC at the bottom of the image.

A closer view of the rear of the MX740c shows the mezzanine NIC along with the second mezzanine slot, which is unpopulated.

Each server has a few extra options that can be added: a redundant microSD card + vFlash option and/or a redundant RAID 1 M.2 SSD option. These optional add-ons can be found on the right side of the blade server.

The redundant RAID M.2 device, removed from the server. There is an M.2 SSD on each side of the card.

The PowerEdge MX840c includes 4 CPUs, 48 DIMMs, 4 mezzanine card slots and 2 storage slots (HBA or PERC).

The MX840c is a double-width server sled. CPUs 1 and 2 are on the bottom of this image with CPUs 3 and 4 being on top. The bottom section is accessed via a handle that lifts off the CPU3/4 board.

This image also shows the connectors where mezzanine NICs would be installed and connected to I/O modules.

The PowerEdge MX5116s provides storage to server sleds with 16 hot-pluggable slots supporting SAS HDDs or SSDs. The sled is designed to slide out for serviceability without drives losing connectivity.

Networking

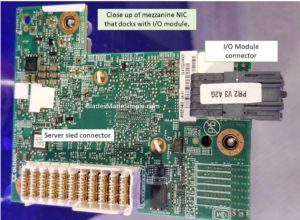

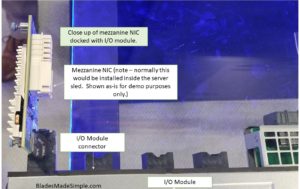

Mentioned several times above, the mezzanine NIC is a key part of the PowerEdge MX networking architecture. It provides connectors that dock directly to the I/O module while being a removable card that is easily serviced or upgraded in the future.

This image demonstrates how the mezzanine NIC above docks with the I/O module. This point-to-point connection removes the need for a chassis midplane and allows for easy upgrades as new technologies, like Gen-Z, become available.

The rear view of the PowerEdge MX9116n Fabric Switch Engine, showing the dedicated connectors for each server sled slot in the PowerEdge MX7000 chassis.
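
If it helps to picture the direct docking described above, here is a rough Python sketch of which mezzanine-to-I/O-module connections exist for a given sled. It is only an illustration, not anything from Dell EMC: the `Sled` class, the `docked_links` function, and the "A"/"B" mezzanine labels are names I made up, and pairing mezzanine position A with Fabrics A1/A2 and position B with B1/B2 is an assumption based on the photos. Fabric C is left out to keep it short.

```python
from dataclasses import dataclass

# Fabric slots named in this post. Pairing mezzanine position "A" with
# Fabrics A1/A2 and "B" with B1/B2 is an assumption for illustration.
FABRIC_SLOTS = {"A": ("A1", "A2"), "B": ("B1", "B2")}


@dataclass
class Sled:
    """Hypothetical model of a server sled and its populated mezzanine slots."""
    model: str
    mezz_installed: tuple  # e.g. ("A",) when only the first mezzanine NIC is present


def docked_links(sled, installed_ioms):
    """Return (mezzanine, fabric slot) pairs where a NIC meets an installed I/O module."""
    links = []
    for mezz in sled.mezz_installed:
        for slot in FABRIC_SLOTS[mezz]:
            # With no midplane, a link exists only if both ends are physically present.
            if slot in installed_ioms:
                links.append((mezz, slot))
    return links


# Roughly the show-floor demo: one mezzanine NIC, I/O module only in A2.
demo = Sled("MX740c", ("A",))
print(docked_links(demo, {"A2"}))  # [('A', 'A2')]
```

The point of the sketch is simply that nothing sits between the two ends: pull the I/O module or the mezzanine NIC and the link is gone, which is also why either side can be swapped for newer technology later.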

Hopefully these images will help expand your understanding of how the PowerEdge MX architecture works. I could have used the marketing photos, but chose to show you the actual product demos from the VMworld show floor. If you have any questions about any of the above, hit me up on Twitter (@kevin_houston) or email me at kevin AT bladesmadesimple.com. Thanks for reading!

Kevin Houston is the founder and Editor-in-Chief of BladesMadeSimple.com. He has 20 years of experience in the x86 server marketplace. Since 1997 Kevin has worked at several resellers in the Atlanta area, and has a vast array of competitive x86 server knowledge and certifications as well as an in-depth understanding of VMware and Citrix virtualization. Kevin has worked at Dell EMC since August 2011 working as a Server Sales Engineer covering the Global Enterprise market from 2011 to 2017 and currently works as a Chief Technical Server Architect supporting the Central Region.
