IMPORTANT NOTE – I updated this blog post on Feb. 28, 2011 with better details. To view the updated blog post, please go to:

https://bladesmadesimple.com/2011/02/4-socket-blade-servers-density-vendor-comparison-2011/

Original Post (March 10, 2010):

With the Intel Nehalem EX processor a couple of weeks away, I wonder what impact it will have on the blade server market. I’ve been talking about IBM’s HX5 blade server for several months now, so it is very clear that the blade server vendors will be developing blades that use some iteration of the Xeon 7500 processor. In fact, I’ve had several people confirm on Twitter that HP, Dell and even Cisco will be offering a 4 socket blade after Intel officially announces the processor on March 30. For today’s post, I wanted to take a look at how the 4 socket blade space will impact the overall capacity of a blade server environment. NOTE: this is purely speculation; I have no definitive information from any of these vendors that is not already public.

Cisco

The Cisco UCS 5108 chassis holds 8 “half-width” B200 blade servers or 4 “full-width” B250 blade servers, so when we guess at what design Cisco will use for a 4 socket Intel Xeon 7500 (Nehalem EX) architecture, I have to place my bet on the full-width form factor. Why? Simply because there is more real estate. The Cisco B250 M1 blade server is known for its large memory capacity; however, Cisco could sacrifice some of that extra memory space for a 4 socket “Cisco B350” blade. This would be a bit of an issue for customers wanting to fill a complete rack with these servers, as it would only allow for a total of 28 servers in a 42U rack (7 chassis x 4 servers per chassis).

On the other hand, Cisco is in a unique position in that their half-width form factor also has extra real estate because they don’t have 2 daughter card slots like their competitors. Perhaps Cisco would create a half-width blade with 4 CPUs (a B300?). With a 42U rack and a half-width design, you would be able to get a maximum of 56 blade servers (7 chassis x 8 servers per chassis).

Dell

The 10U M1000e chassis from Dell can currently handle 16 “half-height” blade servers or 8 “full-height” blade servers. I don’t foresee any way that Dell would be able to put 4 CPUs into a half-height blade. There just isn’t enough room. To do this, they would have to sacrifice something, like memory slots or a daughter card expansion slot, which just doesn’t seem worth it. Therefore, I predict that Dell’s 4 socket blade will be a full-height blade server, probably named the PowerEdge M910. With this assumption, you would be able to get 32 blade servers in a 42U rack (4 chassis x 8 blades).

HP

Similar to Dell, HP’s 10U BladeSystem c7000 chassis can currently handle 16 “half-height” blade servers or 8 “full-height” blade servers. I don’t foresee any way that HP would be able to put 4 CPUs into a half-height blade. There just isn’t enough room. To do this, they would have to sacrifice something, like memory slots or a daughter card expansion slot, which just doesn’t seem worth it. Therefore, I predict that HP’s 4 socket blade will be a full-height blade server, probably named the ProLiant BL680 G7 (yes, they’ll skip G6). With this assumption, you would be able to get 32 blade servers in a 42U rack (4 chassis x 8 blades).

IBM

Finally, IBM’s 9U BladeCenter H chassis offers up 14 servers. IBM has one size of server, called a “single-wide.” IBM will also have the ability to combine servers together to form a “double-wide”, which is what is needed for the newly announced 4 socket IBM BladeCenter HX5. A double-wide blade server reduces the IBM BladeCenter’s capacity to 7 servers per chassis. This means that you would be able to put 28 4 socket IBM HX5 blade servers into a 42U rack (4 chassis x 7 servers each).

Summary

In a tie for 1st place, at 32 blade servers in a 42U rack, Dell and HP would have the most blade server density based on their existing full-height blade server design. IBM and Cisco would tie for 3rd place with 28 blade servers in a 42U rack. However, IF Cisco were able to magically re-design its half-width servers to hold 4 CPUs, it would take 1st place for blade density with 56 servers. (By the same arithmetic, a 4 CPU half-height blade from HP or Dell would yield 64 servers: 4 chassis x 16 blades.)
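The rack counts in this post all come from the same arithmetic: the number of whole chassis that fit in 42U, multiplied by the 4 socket blades each chassis holds. Here is a minimal Python sketch of that calculation. The chassis heights for Dell, HP and IBM are the ones quoted in this post; the 6U height for the Cisco UCS 5108 is an assumption inferred from the 7 chassis per rack figure, and the names `CHASSIS` and `blades_per_rack` are purely illustrative. Like the rest of this post, the blade counts are speculation, not vendor data.

```python
# Rack density sketch: whole chassis per rack x 4 socket blades per chassis.
RACK_UNITS = 42

# name -> (chassis height in U, speculated 4 socket blades per chassis)
# Cisco's 6U height is an assumption inferred from "7 chassis" in a 42U rack.
CHASSIS = {
    "Cisco UCS 5108 (full-width)": (6, 4),
    "Cisco UCS 5108 (half-width, hypothetical)": (6, 8),
    "Dell M1000e (full-height)": (10, 8),
    "HP c7000 (full-height)": (10, 8),
    "IBM BladeCenter H (double-wide HX5)": (9, 7),
}


def blades_per_rack(chassis_u, blades_per_chassis, rack_u=RACK_UNITS):
    """Return how many blades fit when only whole chassis are racked."""
    whole_chassis = rack_u // chassis_u  # partial chassis do not count
    return whole_chassis * blades_per_chassis


density = {name: blades_per_rack(u, n) for name, (u, n) in CHASSIS.items()}

# Print from most dense to least dense.
for name, count in sorted(density.items(), key=lambda item: -item[1]):
    print(f"{name}: {count} blades per {RACK_UNITS}U rack")
```

The sketch counts rack units only. It ignores power, cooling and any space set aside for switching, any of which could lower the real number.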

Yes, I know that the chances are slim that anyone would fill up a rack with 4 socket servers; however, I thought this would be a good comparison to make. What are your thoughts? Let me know in the comments below.

The HP BL2x220c has 2 x 4-core CPUs per node, with 2 nodes in a single half-height slot (so that is 4 CPUs in a half-height slot). I wonder if they could put a single blade with 4 CPUs in the same space.

The 2×220 does do 4 proc's, but to ensure the c7000 power structure is not overloaded the server doesn't utilize the highest performance processors, has less RAM, only 1 HD per node, and does all this to ensure that the c7000 power doesn't blow…

Because of this, I think when we look at the power envelope of the Nehalem-EX, and the memory it will want to eat, we will see that building a half-height blade with 4 processors may become a problem for the power capabilities of the chassis…

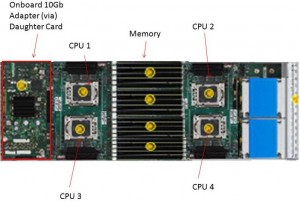

If I were doing the layout for the Cisco 4-socket B300 blade, I'd put the memory between two pairs of processors rather than grouping four processors together as your mockup predicts; it would shorten the memory traces. Other than that minor nit, good article.

A 4 socket Cisco “B300” blade would appear to function better with the memory in the middle. I've updated my post to reflect this new image. I appreciate the suggestion. Thanks for reading!

Great point, Adam. I think all of the blade vendors may run into this problem trying to squeeze 4 CPUs into a half-size blade. Thanks for reading!

Adam, I think you are probably right regarding the power/memory of the new EX, but I wonder if they could shoehorn it in ;-)

You forgot the big memory buffers you need for EX; not going to happen in a half-width blade IMO. Dell's going to have some pretty unique innovation in their EX design that is going to surprise you.

#dell and “innovation” have not been used together in many sentences over the past few years, so I look forward to seeing what they have in store. Thanks for the comment!

Hi Kevin, I work at Dell and enjoy reading your blog. We don't talk about our innovation enough in the press: real innovation that helps customers. I'd be happy to talk to you about it if you want. Our EX launch is going to be a big proof point of this, and it will really contrast our approach to solving real-world customer problems in a much more practical way than (for example) IBM's approach. There are many other examples shipping today in our blades. Take care.

We are a major blade shop with about 20 chassis.

We have pre-production M910s in house under evaluation at this time. (I will not steal Dell's thunder on what they look like.) We have loaded Oracle, VMware, Linux and Windows, and are testing various applications. So far, so good. We are in the process of our next round of capacity expansion and expect to replace all of our production systems with the EX line.

Here is my take on EX. It will address 512 to 1024 GB of RAM, so the primary focus of the EX will be to increase density in existing deployments or in new consolidation efforts while giving databases more cache.

For us, we will be able to increase our density of VMs or databases five-fold over the current 96GB RAM half-height system – with the impact being felt in our dev/test environments. In production, we will be able to deal with increased workloads by consolidating hoggy apps onto VMs from standalone systems and increasing the cache on our Oracle databases.

The limit we will run into will be NIC bottlenecking due to the movement from 10-15 active VMs to 50-70 active VMs per blade – for both server to server communications and our iSCSI SAN. We are not sure where the sweet spot is – we expect this will be a bigger impact in production under load than our other environments. We plan to test this once we get our hands on a production M910.

Dell and others will have to move towards the next step on network bandwidth at some point.

You mention NIC bottlenecking due to the movement from 10-15 active VMs to 50-70 active VMs per blade – for both server to server communications and our iSCSI SAN. Are you using 10Gb Ethernet connections from the Dell blades to the network? I'm always interested in learning about real usages of the blade servers. Thanks for reading!

I'd love to learn more about #dell 's upcoming Nehalem EX innovation. Reach out to me – you can find my contact information on the site, or Direct Message me on Twitter (@Kevin_Houston). Thanks for reading!

Good comparison Kevin. The next step would be to compare memory DIMM count as well as CPU count, since the key to really using a 4-socket system is to have sufficient RAM.

RAM is important, but so is enough redundant I/O bandwidth, and that's often where 4-socket blades fall short.

You make a great point that absolutely needs to be looked at in more detail. We published a paper with Intel on how a plain old 10Gb controller using standard VMware tools is ideal for LAN consolidation and even LAN/SAN consolidation with iSCSI. And you don't need a Flex10 or IBM VFA to do it; VMware already has all the QoS/rate limiting (if you need it; most environments don't even need that level of complexity today) and VLAN capabilities, so you can keep it simple. That paper is posted here:

http://download.intel.com/support/network/sb/10…

Brad Hedlund from Cisco posted a similar view in his blog but naturally advocated use of Nexus 5K/1K QoS features to achieve a similar goal.

To your point though, EX does enable much higher levels of consolidation, so our next project will be to explore that scenario in more detail.

Nik,

Dell 4S blades (others have a bit more limitations in redundancy, but can support pretty large IO expansion) have the capability to support 2 pairs of mezz cards (e.g. 2 x 2 port 10Gb + 2 x 2 port FC or 4 x 2 port 10Gb) in addition to 2 pairs of dual port 1Gb. So lots of IO capability and full redundancy.

To remove the IO bottleneck, you may want to look into using InfiniBand for your inter-blade communications. We have been testing a pair of Xsigo directors for the past 4 months, and our vMotion speeds have been around 900 MB/s (yes, big B) with a Dell M1000e and HP c7000 for blades as well as about 20 other servers (rack, not blade). We are comparing this to FCoE performance (sorry, not iSCSI).

cheers!

Devices like these are related to computers and memory, so these kinds of chassis are somewhat expensive and must be stored in good-quality server racks to keep them free from possible harm.

Does HP have a 4 socket Intel 7500 blade out yet? Is it going to be double-wide like the Itanium blade?

If possible, please mail me all blade server details, with configurations, at the address below:

amit.lokare@homail.com
