What if you could run a graphics-intensive application like CAD on a system in Chicago while you were sitting in Atlanta? What if you could work on a multi-million dollar animated feature film from the comfort of your home? These scenarios, and more, could be possible with the HP WS460c G6 Workstation Blade.

Author Archives: Kevin Houston

Yet Another Win for HP Blades, but Why?

I heard a rumour on Friday that HP has been chosen by another animated movie studio to provide the blade servers to render an upcoming movie. To recount the movies that have used / are using HP blades:

It's A Bird, It's a Plane, it's Superdome 2 – ON A BLADE SERVER

Wow. HP continues its “blade everything” campaign from 2007 with a new offering today: HP Integrity Superdome 2 on blade servers. Touting a message of “Mission-Critical Converged Infrastructure,” HP is combining the mission-critical Superdome 2 architecture with its successful, scalable blade architecture.


Will LIGHT Replace Cables in Blade Servers?

Part of the Technology Behind Lightfleet's Optical Interconnect Technology (courtesy Lightfleet.com)

CNET.com recently reported that for the past 7 years, a company called Lightfleet has been working on a way to replace the cabling and switches used in blade environments with light, and in fact has already delivered a prototype to Microsoft Labs.

Behind the Scenes at the InfoWorld Blade Shoot-Out

Daniel Bowers at HP recently posted some behind-the-scenes info on what HP used for the InfoWorld Blade Shoot-Out, which I posted about a few weeks ago and in which HP’s blade design took 1st place. According to Daniel, HP’s final config consisted of:

- a c7000 enclosure

- 4 ProLiant BL460c G6 server blades (loaded with VMware ESX) built with 6-core Xeon processors and 8GB LVDIMMs

- 2 additional BL460c G6s with StorageWorks SB40c storage blades for shared storage

- a Virtual Connect Flex-10 module

- a 4Gb fibre switch

Jump over to Daniel’s HP blog to read the full article, including how HP almost didn’t compete in the Shoot-Out and how they controlled another c7000 enclosure 3,000 miles away (Hawaii to Houston).


Daniel’s HP blog is located at http://www.communities.hp.com/online/blogs/eyeonblades/archive/2010/04/13/behind-the-scenes-at-the-infoworld-blade-shoot-out.aspx

IBM Announces New Blade Servers with POWER7 (UPDATED)

UPDATED 4/14/2010 – IBM announced today their newest blade servers using the POWER7 processor. The BladeCenter PS700, PS701 and PS702 servers are IBM’s latest additions to the blade server family, following last month’s announcement of the BladeCenter HX5 server, based on the Nehalem EX processor. The POWER7 processor-based PS700, PS701 and PS702 blades support the AIX, IBM i, and Linux operating systems. (For Windows operating systems, stick with the HS22 or the HX5.) For those of you not familiar with the POWER processor, the POWER7 is a 64-bit processor that, in these blades, comes with 4 or 8 cores, 256KB of L2 cache per core and 4MB of L3 cache per core.

Today’s announcement also reflects IBM’s new naming schema. Instead of being labeled “JS” blades like in the past, the new POWER family blade servers will be titled “PS”, for Power Systems. Finally – a naming schema that makes sense. (Will someone explain what IBM’s “LS” blades stand for??) Let’s review each of the three blades.


IBM BladeCenter PS700

The PS700 blade server is a single-socket, single-wide 4-core 3.0GHz POWER7 processor-based server that has the following:

- 8 DDR3 memory slots (available DIMM sizes are 4GB at 1066MHz or 8GB at 800MHz)

- 2 onboard 1Gb Ethernet ports

- integrated SAS controller supporting RAID levels 0, 1 or 10

- 2 onboard disk drives (SAS or Solid State Drives)

- one PCIe CIOv expansion card slot

- one PCIe CFFh expansion card slot

The PS700 is supported in the BladeCenter E, H, HT and S chassis. (Note: support in the BladeCenter E requires an Advanced Management Module and a minimum of two 2000-watt power supplies.)

IBM BladeCenter PS701

The PS701 blade server is a single-socket, single-wide 8-core 3.0GHz POWER7 processor-based server that has the following:

- 16 DDR3 memory slots (available DIMM sizes are 4GB at 1066MHz or 8GB at 800MHz)

- 2 onboard 1Gb Ethernet ports

- integrated SAS controller supporting RAID levels 0, 1 or 10

- 1 onboard disk drive (SAS or Solid State Drive)

- one PCIe CIOv expansion card slot

- one PCIe CFFh expansion card slot

The PS701 is supported in the BladeCenter H, HT and S chassis only.

IBM BladeCenter PS702

The PS702 blade server is a dual-socket, double-wide 16-core (via 2 x 8-core CPUs) 3.0GHz POWER7 processor-based server that has the following:

- 32 DDR3 memory slots (available DIMM sizes are 4GB at 1066MHz or 8GB at 800MHz)

- 4 onboard 1Gb Ethernet ports

- integrated SAS controller supporting RAID levels 0, 1 or 10

- 2 onboard disk drives (SAS or Solid State Drives)

- 2 PCIe CIOv expansion card slots

- 2 PCIe CFFh expansion card slots

The PS702 is supported in the BladeCenter H, HT and S chassis only.
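To compare the three spec lists side by side, here is a small Python sketch that works out maximum memory and total on-chip cache for each blade. It assumes every DIMM slot is filled with the same DIMM size and applies the per-core cache figures quoted in the announcement (256KB L2, 4MB L3); the dictionary and function names are purely illustrative.

```python
# Illustrative summary of the PS700/PS701/PS702 specs listed above.
# Assumption: all DIMM slots hold the same DIMM size.

L2_KB_PER_CORE = 256  # POWER7 L2 cache per core, per the announcement
L3_MB_PER_CORE = 4    # POWER7 L3 cache per core, per the announcement

# (cores, DIMM slots) for each blade, taken from the spec lists
BLADES = {
    "PS700": (4, 8),
    "PS701": (8, 16),
    "PS702": (16, 32),
}


def blade_summary(name: str, dimm_gb: int) -> dict:
    """Cores, max memory in GB, and total L2/L3 cache in MB for one blade."""
    cores, slots = BLADES[name]
    return {
        "cores": cores,
        "memory_gb": slots * dimm_gb,
        "l2_mb": cores * L2_KB_PER_CORE / 1024,
        "l3_mb": cores * L3_MB_PER_CORE,
    }


for name in BLADES:
    fast = blade_summary(name, 4)  # 4GB DIMMs run at 1066MHz
    big = blade_summary(name, 8)   # 8GB DIMMs run at 800MHz
    print(f"{name}: {fast['cores']} cores, "
          f"{fast['memory_gb']}GB @ 1066MHz or {big['memory_gb']}GB @ 800MHz, "
          f"{fast['l3_mb']}MB L3")
```

The trade-off the sketch makes visible: choosing the faster 1066MHz memory halves the maximum capacity on every model.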

For more technical details on the PS blade servers, please visit IBM’s redbook page at: http://www.redbooks.ibm.com/redpieces/abstracts/redp4655.html?Open

Rumour? New 8 Port Cisco Fabric Extender for UCS

I recently heard a rumour that Cisco was coming out with an 8 port Fabric Extender (FEX) for the UCS 5108, so I thought I’d take some time to see what this would look like. NOTE: this is purely speculation; I have no definitive information from Cisco, so this may be false.

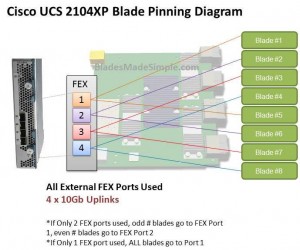

Before we discuss the 8 port FEX, let’s take a look at the 4 port UCS 2140XP FEX and how the blade servers connect, or “pin,” to it. The diagram below shows a single FEX. A single UCS 2140XP FEX has 4 x 10Gb uplinks to the 6100 Fabric Interconnect Module. The UCS 5108 chassis has 2 FEX, so each server gets one 10Gb connection per FEX. However, as you can see, each server shares that 10Gb connection with another blade server. I’m not an I/O guy, so I can’t say whether having 2 servers connect to the same 10Gb uplink port would cause problems, but simple logic tells me that two items competing for the same resource “could” cause contention. If you decide to connect only 2 of the 4 external FEX ports, then all of the “odd #” blade servers connect to port 1 and all of the “even #” blade servers connect to port 2. Now you are looking at 4 servers contending for 1 uplink port. Of course, if you connect only 1 external uplink, then all 8 servers share 1 uplink port.


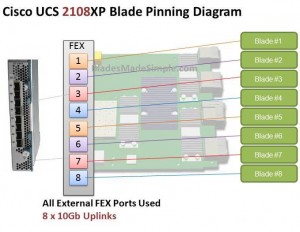
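The pinning pattern described above (pairs of blades per port with 4 uplinks, odd/even with 2, everything on one port with 1) behaves like a round-robin by slot number. Here is a minimal Python sketch of that model; the round-robin formula is an assumption that reproduces the behaviour described in the post, not Cisco’s published algorithm, and the function names are hypothetical.

```python
from collections import defaultdict

SLOTS = range(1, 9)          # UCS 5108: 8 half-width blade slots
SUPPORTED_LINKS = (1, 2, 4)  # uplink counts discussed above for the 4 port FEX


def pin(slot: int, links: int) -> int:
    """Map a blade slot to a FEX uplink port (assumed round-robin by slot)."""
    if links not in SUPPORTED_LINKS:
        raise ValueError(f"unsupported uplink count: {links}")
    return (slot - 1) % links + 1


def pinning_table(links: int) -> dict:
    """Group blade slots by the uplink port they share."""
    table = defaultdict(list)
    for slot in SLOTS:
        table[pin(slot, links)].append(slot)
    return dict(table)


for links in SUPPORTED_LINKS:
    per_port = len(SLOTS) // links
    print(f"{links} uplink(s): {pinning_table(links)} -> "
          f"{per_port} blades per 10Gb port")
```

Adding 8 to `SUPPORTED_LINKS` models the rumoured 8 port FEX: `(slot - 1) % 8 + 1` maps every slot to its own port, giving the 1:1 blade-to-uplink ratio discussed next.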

Introducing the 8 Port Fabric Extender (FEX)

I’ve looked around and can’t confirm if this product is really coming or not, but I’ve heard a rumour that there is going to be an 8 port version of the UCS 2100 series Fabric Extender. I’d imagine it would be the UCS 2180XP Fabric Extender and the diagram below shows what I picture it would look like. The biggest advantage I see of this design would be that each server would have a dedicated uplink port to the Fabric Interconnect. That being said, if the existing 20 and 40 port Fabric Interconnects remain, this 8 port FEX design would quickly eat up the available ports on the Fabric Interconnect switches since the FEX ports directly connect to the Fabric Interconnect ports. So – does this mean there is also a larger 6100 series Fabric Interconnect on the way? I don’t know, but it definitely seems possible.


What do you think of this rumoured new offering? Does having a 1:1 blade server to uplink port matter or is this just more

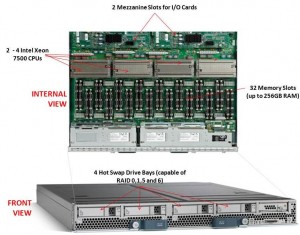
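The port-consumption concern above is simple division. Since each UCS 5108 has two FEX, one cabled to each Fabric Interconnect, every chassis uses as many ports on each Fabric Interconnect as its FEX has uplinks. The sketch below assumes every Fabric Interconnect port is used for FEX links, with none reserved for LAN or SAN uplinks, so real numbers would be lower; the function name is hypothetical.

```python
BLADES_PER_CHASSIS = 8  # half-width blades per UCS 5108


def chassis_per_fabric_interconnect(fi_ports: int, links_per_fex: int) -> int:
    """Chassis one Fabric Interconnect can terminate.

    Assumes all FI ports connect to FEX uplinks (none kept for northbound
    LAN/SAN traffic), which overstates what a real deployment gets.
    """
    return fi_ports // links_per_fex


for fi_ports in (20, 40):   # the existing 20 and 40 port Fabric Interconnects
    for links in (4, 8):    # today's 4 port FEX vs. the rumoured 8 port FEX
        n = chassis_per_fabric_interconnect(fi_ports, links)
        print(f"{fi_ports}-port FI, {links} links per FEX: "
              f"{n} chassis, {n * BLADES_PER_CHASSIS} blades")
```

Under these assumptions, moving from 4 to 8 links per FEX halves the chassis a Fabric Interconnect can serve (5 down to 2 on a 20 port model), which is why a larger 6100 series seems plausible.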

New Cisco Blade Server: B440-M1

Cisco recently announced their first blade offering with the Intel Xeon 7500 processor, known as the “Cisco UCS B440-M1 High-Performance Blade Server.” This new blade is a full-width blade that offers 2 – 4 Xeon 7500 processors and 32 memory slots, for up to 256GB RAM, as well as 4 hot-swap drive bays. Since the server is a full-width blade, it will have the capability to handle 2 dual-port mezzanine cards for up to 40 Gbps I/O per blade.


Each Cisco UCS 5108 Blade Server Chassis can house up to four B440 M1 servers (maximum 160 per Unified Computing System).
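The I/O and scale figures above follow from a little multiplication. This sketch assumes the mezzanine ports are 10Gb and takes the 40-chassis limit from the management discussion later in this post (up to 40 chassis per pair of 6100s); the constant names are illustrative.

```python
# Back-of-the-envelope check of the B440 M1 figures quoted above.
MEZZ_CARDS = 2       # full-width blade: two mezzanine card slots
PORTS_PER_CARD = 2   # dual-port cards
GBPS_PER_PORT = 10   # assumption: 10Gb ports

BLADES_PER_CHASSIS = 4     # full-width B440 M1s per UCS 5108
MAX_CHASSIS_PER_UCS = 40   # chassis managed by one pair of 6100s

io_gbps = MEZZ_CARDS * PORTS_PER_CARD * GBPS_PER_PORT
max_blades = BLADES_PER_CHASSIS * MAX_CHASSIS_PER_UCS
print(io_gbps, max_blades)  # 40 160
```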

How Does It Compare to the Competition?

Since I like to talk about all of the major blade server vendors, I thought I’d take a look at how the new Cisco B440 M1 compares to IBM and Dell. (HP has not yet announced their Intel Xeon 7500 offering.)

Processor Offering

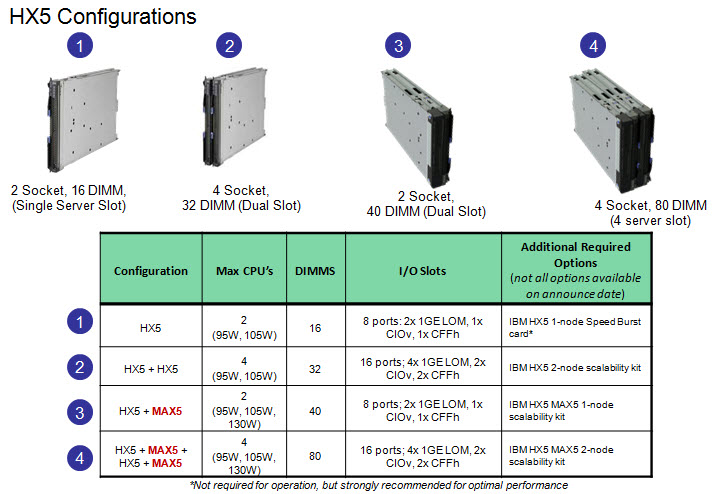

Both Cisco and Dell offer models with 2 – 4 Xeon 7500 CPUs as standard. Each vendor has variations on speeds: Dell has 9 processor speed offerings, Cisco hasn’t released their speeds, and IBM’s BladeCenter HX5 blade server will initially have 5 processor speed offerings. Of the three vendors’ blades, however, IBM’s is the only one designed to scale from 2 CPUs to 4 CPUs by connecting 2 x HX5 blade servers. Along with this comes their “FlexNode” technology, which enables users to split the 4 processor blade system back into 2 x 2 processor systems at specific points during the day. Although not announced, and purely my speculation, IBM’s design also suggests a possible future capability of connecting 4 x 2 processor HX5s for an 8-way design. Since each of the vendors offers up to 4 x Xeon 7500s, IBM’s scalability is the deciding factor, so I’m giving the advantage in this category to IBM. WINNER: IBM

Memory Capacity

Both IBM and Cisco are offering 32 DIMM slots with their blade solutions; however, they are not certifying the use of 16GB DIMMs (only 4GB and 8GB DIMMs), so their offerings only scale to 256GB of RAM. Dell claims 512GB of memory capacity on their PowerEdge 11G M910 blade server, but that requires 16GB DIMMs. Realistically, I think the M910 would mostly be used with 8GB DIMMs, which would make Dell’s design equal to IBM’s and Cisco’s. I’m not sure who has the money to buy 16GB DIMMs, but if you do – WINNER: Dell (or a TIE)
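The capacity numbers in this comparison are just slot count times DIMM size. A quick Python check, assuming all 32 slots are populated with identical DIMMs (for IBM, 32 slots means two HX5s at 16 DIMMs each); the function name is illustrative.

```python
DIMM_SLOTS = 32  # per 4-socket config: Cisco B440 M1, IBM 2 x HX5, Dell M910


def max_memory_gb(dimm_gb: int, slots: int = DIMM_SLOTS) -> int:
    """Maximum RAM when every slot holds a DIMM of the given size."""
    return dimm_gb * slots


for dimm_gb in (4, 8, 16):  # 16GB DIMMs are only offered on the Dell M910
    print(f"{DIMM_SLOTS} x {dimm_gb}GB DIMMs = {max_memory_gb(dimm_gb)}GB")
```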

Server Density

As previously mentioned, Cisco’s B440-M1 blade server is a “full-width” blade, so 4 will fit into a 6U high UCS 5108 chassis. Theoretically, you could fit 7 x UCS 5108 blade chassis into a rack, which would equal a total of 28 x B440-M1s per 42U rack.

Dell’s PowerEdge 11G M910 blade server is a “full-height” blade, so 8 will fit into a 10U high M1000e chassis. This means that 4 x M1000e chassis would fit into a 42U rack, so 32 x Dell PowerEdge M910 blade servers should fit into a 42U rack.

IBM’s BladeCenter HX5 blade server is a single-slot blade server; however, to make it a 4 processor blade, it would take up 2 server slots. The BladeCenter H has 14 server slots, so the IBM solution can hold 7 x 4 processor HX5 blade servers per chassis. Since the chassis is 9U high, you can only fit 4 into a 42U rack, so you would be able to fit a total of 28 IBM HX5 (4 processor) servers into a 42U rack.

WINNER: Dell

Management

The final category I’ll look at is management. Both Dell and IBM have management controllers built into each chassis, so managing the many chassis in the maximum servers-per-rack scenarios described above could add some additional burden. Cisco’s design, however, allows management to be performed through the UCS 6100 Fabric Interconnect modules. In fact, up to 40 chassis could be managed by 1 pair of 6100s. There are additional features this design offers, but for the sake of this discussion, I’m calling WINNER: Cisco.

Overall, Cisco’s new offering is a nice addition to their existing blade portfolio. While IBM has some interesting innovation in CPU scalability and Dell appears to have the overall advantage in server density, Cisco leads on the management front.

Cisco’s UCS B440 M1 is expected to ship in the June time frame. Pricing is not yet available. For more information, please visit Cisco’s UCS web site at http://www.cisco.com/en/US/products/ps10921/index.html.
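The server density comparison in this post can be reproduced with a few lines of Python. This sketch only counts rack units: it assumes a 42U rack filled entirely with chassis and ignores power, cooling, PDUs and top-of-rack switches, all of which would reduce real-world density. The class and field names are illustrative.

```python
from dataclasses import dataclass

RACK_U = 42  # standard full-height rack used in the comparison


@dataclass
class BladeSystem:
    name: str
    chassis_u: int            # chassis height in rack units
    servers_per_chassis: int  # 4-socket servers that fit in one chassis

    def chassis_per_rack(self) -> int:
        # Whole chassis only; leftover U (e.g. 6U with 9U chassis) is unused.
        return RACK_U // self.chassis_u

    def servers_per_rack(self) -> int:
        return self.chassis_per_rack() * self.servers_per_chassis


SYSTEMS = [
    BladeSystem("Cisco B440 M1 in UCS 5108", chassis_u=6, servers_per_chassis=4),
    BladeSystem("Dell M910 in M1000e", chassis_u=10, servers_per_chassis=8),
    # Two HX5s form one 4-socket server, so 14 BladeCenter H slots hold 7.
    BladeSystem("IBM 2 x HX5 in BladeCenter H", chassis_u=9,
                servers_per_chassis=14 // 2),
]

for s in sorted(SYSTEMS, key=BladeSystem.servers_per_rack, reverse=True):
    print(f"{s.name}: {s.chassis_per_rack()} chassis, "
          f"{s.servers_per_rack()} four-socket servers per {RACK_U}U rack")
```

Dell comes out ahead because its 10U chassis wastes only 2U of the rack, while each chassis holds twice as many 4-socket servers as Cisco’s.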

Technical Details on the IBM HX5 Blade Server (UPDATED)

(Updated 4/22/2010 at 2:44 p.m.)


IBM officially announced the HX5 on Tuesday, so I’m going to take the liberty of digging a little deeper into the details of this blade server. I previously provided a high-level overview of the blade server in an earlier post, so now I want to get a little more technical, courtesy of IBM. It is my understanding that general availability of this server will be in the mid-June time frame; however, that is subject to change without notice.

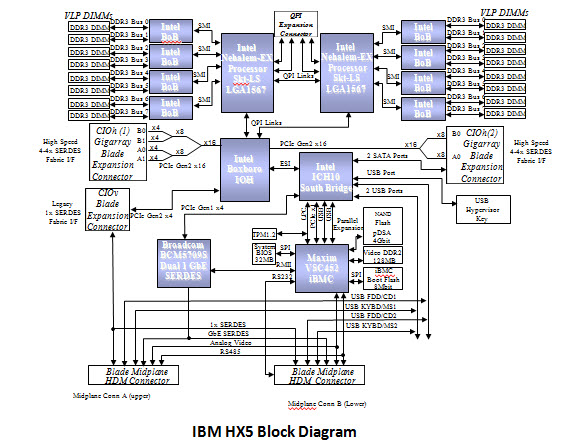

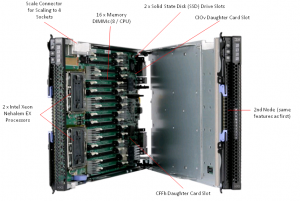

Block Diagram

Below are the details of the HX5’s block diagram. There are no secrets here, as IBM is using the Intel Xeon 6500 and 7500 chipsets that I blogged about previously.

As previously mentioned, the value that the IBM HX5 blade server brings is scalability. A user can buy a single blade server with 2 CPUs and 16 DIMMs, then expand it to 40 DIMMs with a 24 DIMM MAX5 memory blade. Or, in the near future, a user could combine 2 x HX5 servers to make a 4 CPU server with 32 DIMMs, or add a MAX5 memory blade to each server and have a 4 CPU server with 80 DIMMs.
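The four configurations above come from two building blocks: a 2-CPU, 16-DIMM HX5 and a 24-DIMM MAX5. This Python sketch enumerates them, assuming at most one MAX5 per HX5 and at most 2 scaled HX5 nodes, as described in the post; the function name is hypothetical.

```python
HX5_CPUS = 2      # CPUs per HX5 blade
HX5_DIMMS = 16    # DIMM slots per HX5 blade
MAX5_DIMMS = 24   # DIMM slots per MAX5 memory blade


def hx5_config(nodes: int, with_max5: bool) -> tuple:
    """(CPUs, DIMMs) for `nodes` scaled HX5s, each optionally with one MAX5."""
    if nodes not in (1, 2):
        raise ValueError("the configs described here use 1 or 2 HX5 nodes")
    dimms_per_node = HX5_DIMMS + (MAX5_DIMMS if with_max5 else 0)
    return nodes * HX5_CPUS, nodes * dimms_per_node


for nodes in (1, 2):
    for with_max5 in (False, True):
        cpus, dimms = hx5_config(nodes, with_max5)
        label = "HX5 + MAX5" if with_max5 else "HX5"
        print(f"{nodes} x {label}: {cpus} CPUs, {dimms} DIMMs")
```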

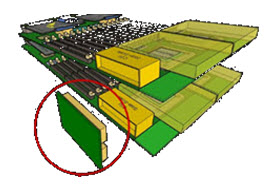

The diagrams below provide a more technical view of the HX5 + MAX5 configs. Note that the “sideplanes” referenced below are actually the “scale connector.” As a reminder, this connector will physically connect 2 HX5 servers on the tops of the servers, allowing the internal communications to extend to each other’s nodes. The easiest way to think of this is like a Lego brick: it will allow HX5s and MAX5s to be connected together. There will be 2-connector, 3-connector and 4-connector offerings.


(Updated) Since the original posting, IBM released the “eX5 Portfolio Technical Overview: IBM System x3850 X5 and IBM BladeCenter HX5,” so I encourage you to go download it and give it a good read. David’s Redbook team always does a great job answering all the questions you might have about an IBM server inside those documents.

If there’s something about the IBM BladeCenter HX5 you want to know about, let me know in the comments below and I’ll see what I can do.

Thanks for reading!

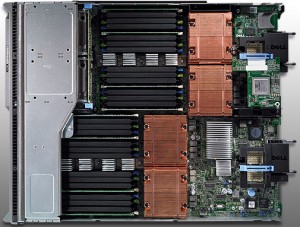

Dell M910 Blade Server – Based on the Nehalem EX

Dell appears to be first to the market today with complete details on their Nehalem EX blade server, the PowerEdge M910. Based on the Nehalem EX technology (aka Intel Xeon 7500 Chipset), the server offers quite a lot of horsepower in a small, full-height blade server footprint.


Some details about the server:

- uses Intel Xeon 7500 or 6500 CPUs

- has support for up to 512GB using 32 x 16GB DIMMs

- comes standard with two embedded Broadcom NetXtreme II Dual Port 5709S Gigabit Ethernet NICs with failover and load balancing

- has two 2.5″ hot-swappable SAS/Solid State Drives

- 4 available I/O mezzanine card slots

- comes standard with a Matrox G200eW with 8MB memory

- can function on 2 CPUs with access to all 32 DIMM slots

Dell (finally) Offers Some Innovation

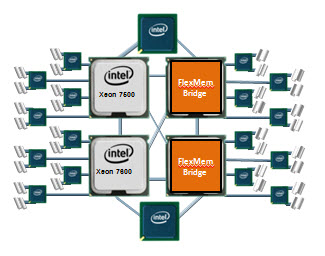

I commented a few weeks ago that “Dell” and “innovation” were rarely used in the same sentence; however, with today’s announcement, I’ll have to retract that statement. Before I elaborate on what I’m referring to, let me do some quick education. The Nehalem architecture gives each processor (CPU) its own memory controller and a dedicated bank of memory. The only downside is that if a CPU is not installed, its attached memory banks are not usable. THIS is where Dell is offering some innovation. Today Dell announced its “FlexMem Bridge” technology. The concept is simple: it allows the memory attached to an unpopulated CPU socket to still be used. In essence, Dell bridges the memory banks of the unpopulated CPU sockets to the server’s populated CPUs. With this technology, a user could start off with only 2 CPUs and still have access to all 32 memory DIMMs. Then, over time, if more CPUs are needed, they simply remove the FlexMem Bridge adapters from the CPU sockets and replace them with CPUs; now they would have a 4 CPU x 32 DIMM blade server.

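The effect of the FlexMem Bridge on usable memory slots can be modelled in a few lines. This is a simplified Python sketch, not Dell’s implementation: it assumes the M910’s 32 DIMM slots are split evenly, 8 per socket, and treats a bridge as simply making an empty socket’s memory bank reachable. The function name is hypothetical.

```python
SOCKETS = 4
DIMMS_PER_SOCKET = 32 // SOCKETS  # assumption: 8 DIMM slots behind each socket


def usable_dimm_slots(cpus: int, bridges: int = 0) -> int:
    """DIMM slots reachable on a 4-socket M910-style blade (simplified model).

    Each populated socket exposes its own memory bank; a FlexMem Bridge in
    an empty socket makes that socket's bank reachable by the installed CPUs.
    """
    if not 1 <= cpus <= SOCKETS:
        raise ValueError("cpu count out of range")
    if not 0 <= bridges <= SOCKETS - cpus:
        raise ValueError("bridges can only occupy empty sockets")
    return (cpus + bridges) * DIMMS_PER_SOCKET


print(usable_dimm_slots(2))             # 16: empty sockets strand their DIMMs
print(usable_dimm_slots(2, bridges=2))  # 32: bridges reclaim them
print(usable_dimm_slots(4))             # 32: bridges swapped out for CPUs
```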

Congrats to Dell. Very cool idea. The Dell PowerEdge M910 is available to order today from the Dell.com website.

Let me know what you guys think.