It occurred to me that I created a reference chart showing what blade server options are available in the market (“Blade Server Comparison – 2018”), but I’ve never listed the options for blade server chassis. In this post, I’ll provide overviews of the blade chassis from Cisco, Dell EMC, HPE and Lenovo. One thing I’m not going to do is give pros and cons for each chassis. The reason is obvious if you have read this blog before: I work for Dell EMC, so I’m not going to promote or bash any vendor. My goal is to simplify each vendor’s offerings and give you one place to get an overview of each blade chassis in the market.

Tag Archives: UCS 5108

Cisco Refreshes UCS B440 M2 and B230 M2 with Intel Xeon E7

Cisco announced today the refresh of its UCS B440 M2 and B230 M2 blade servers with the newly announced Intel Xeon E7.


4 Socket Blade Servers Density: Vendor Comparison (2011)

Revised with corrections 3/1/2011 10:29 a.m. (EST)

Almost a year ago, I wrote an article highlighting the 4-socket blade server offerings. At that time, the offerings were very slim, but over the past 11 months that blog post has received the most hits of any on this site, so I figured it’s time to revise the article. In today’s post, I’ll review the 4-socket Intel and AMD blade servers that are currently on the market. Yes, I know I’ll have to revise this again in a few weeks, but I’ll cross that bridge when I get to it.

Rumour? New 8 Port Cisco Fabric Extender for UCS

I recently heard a rumour that Cisco was coming out with an 8 port Fabric Extender (FEX) for the UCS 5108, so I thought I’d take some time to see what this would look like. NOTE: this is purely speculation. I have no definitive information from Cisco, so this may be false info.

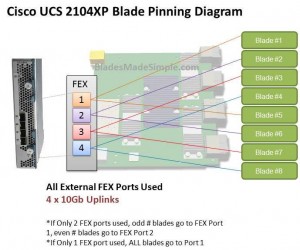

Before we discuss the 8 port FEX, let’s take a look at the 4 port UCS 2140XP FEX and how the blade servers connect, or “pin”, to it. The diagram shows a single FEX. A single UCS 2140XP FEX has 4 x 10Gb uplinks to the 6100 Fabric Interconnect. The UCS 5108 chassis has 2 FEX per chassis, so each server would have a 10Gb connection per FEX. However, as you can see, each server shares that 10Gb connection with another blade server. I’m not an I/O guy, so I can’t say whether or not having 2 servers connect to the same 10Gb uplink port would cause problems, but simple logic would tell me that two items competing for the same resource “could” cause contention. If you decide to connect only 2 of the 4 external FEX ports, then all of the “odd #” blade servers connect to port 1 and all of the “even #” blade servers connect to port 2. Now you are looking at 4 servers contending for 1 uplink port. Of course, if you connect only 1 external uplink, then you are looking at all 8 servers using 1 uplink port.
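The sharing described above can be sketched in a few lines of Python. This is only an illustration, not Cisco’s implementation: the function name `pin_blades` is made up for this example, and the round-robin rule (slot number modulo connected uplinks) is an assumption chosen because it reproduces the odd/even split described for 2 uplinks.

```python
from collections import defaultdict

BLADE_SLOTS = 8  # half-width blade slots in a UCS 5108 chassis


def pin_blades(connected_uplinks, slots=BLADE_SLOTS):
    """Return {uplink port: [blade slots sharing it]} for one FEX.

    Assumption: blades are pinned round-robin by slot number. This is a
    hypothetical rule used for illustration only.
    """
    if connected_uplinks not in (1, 2, 4, 8):
        raise ValueError("expected 1, 2, 4 or 8 connected uplinks")
    pinning = defaultdict(list)
    for slot in range(1, slots + 1):
        port = (slot - 1) % connected_uplinks + 1
        pinning[port].append(slot)
    return dict(pinning)


if __name__ == "__main__":
    for uplinks in (4, 2, 1):
        mapping = pin_blades(uplinks)
        sharing = len(mapping[1])  # blades contending for each 10Gb uplink
        print(f"{uplinks} uplink(s): {sharing} blade(s) per port -> {mapping}")
```

With 4 uplinks connected, each port carries 2 blades; with 2 uplinks, 4 blades; with 1 uplink, all 8. Passing 8 models a hypothetical 1:1 layout.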


Introducing the 8 Port Fabric Extender (FEX)

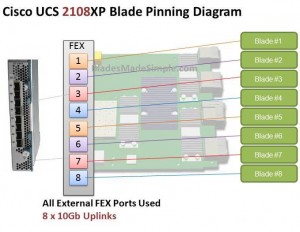

I’ve looked around and can’t confirm whether this product is really coming, but I’ve heard a rumour that there is going to be an 8 port version of the UCS 2100 series Fabric Extender. I’d imagine it would be called the UCS 2180XP Fabric Extender, and the diagram shows what I picture it would look like. The biggest advantage I see in this design is that each server would have a dedicated uplink port to the Fabric Interconnect. That being said, if the existing 20 and 40 port Fabric Interconnects remain, this 8 port FEX design would quickly eat up the available ports on the Fabric Interconnect switches, since the FEX ports connect directly to the Fabric Interconnect ports. So – does this mean there is also a larger 6100 series Fabric Interconnect on the way? I don’t know, but it definitely seems possible.
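To put rough numbers on the port concern, here is a back-of-the-envelope sketch. It makes two simplifying assumptions that are mine, not Cisco’s: each FEX cables all of its uplinks to a single Fabric Interconnect, and every Fabric Interconnect port is free for FEX links (in practice some would be needed for upstream connections). The function name is hypothetical.

```python
def chassis_per_interconnect(fi_ports, uplinks_per_fex):
    """Upper bound on chassis one Fabric Interconnect could serve.

    Assumptions (illustrative only): one FEX per chassis connects to this
    interconnect, all FEX uplinks are cabled, and no interconnect ports
    are reserved for anything else.
    """
    if fi_ports <= 0 or uplinks_per_fex <= 0:
        raise ValueError("port counts must be positive")
    return fi_ports // uplinks_per_fex


if __name__ == "__main__":
    for fi_ports in (20, 40):        # existing interconnect sizes
        for uplinks in (4, 8):       # current FEX vs. rumoured 8 port FEX
            count = chassis_per_interconnect(fi_ports, uplinks)
            print(f"{fi_ports} port interconnect, {uplinks} port FEX: "
                  f"up to {count} chassis")
```

Under these assumptions, fully cabling an 8 port FEX halves the number of chassis an interconnect can serve, compared with the 4 port FEX.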


What do you think of this rumoured new offering? Does having a 1:1 blade server to uplink port matter or is this just more

Cisco's UCS Software

eWeek recently posted snapshots of Cisco’s Unified Computing System (UCS) Software on their site: http://www.eweek.com/c/a/IT-Infrastructure/LABS-GALLERY-Cisco-UCS-Unified-Computing-System-Software-199462/?kc=rss

Take a good look at the software, because it is the reason this blade system will be successful: it treats the physical blades as a resource, just CPUs, memory and I/O. “What” the server should be and “how” the server should act are defined in the UCS management software. It can show you how the blades physically connect to the UCS 6100 Interconnect, show you the configurations of the blades in the attached UCS 5108 chassis, set the boot order of the blades, and so on. Quite frankly, there are too many features to mention and I don’t want to steal their thunder, so take a few minutes to go to: http://www.eweek.com/c/a/IT-Infrastructure/LABS-GALLERY-Cisco-UCS-Unified-Computing-System-Software-199462/?kc=rss