Updated 5/24/2010 – I’ve received some comments about expandability and I’ve received a correction about the speed of Dell’s memory, so I’ve updated this post. You’ll find the corrections / additions below in GREEN.

Since I’ve received a lot of comments on my post about the Dell FlexMem Bridge technology, I thought I would do an unbiased comparison between Dell’s FlexMem Bridge technology (via the PowerEdge 11G M910 blade server) and IBM’s MAX5 + HX5 blade server offering. In summary, both offerings provide the Intel Xeon 7500 CPU plus the ability to add “extended memory,” offering value for virtualization, databases and any other workloads that benefit from large amounts of memory.

The Contenders

IBM

IBM’s extended memory solution is a two part solution consisting of the HX5 blade server PLUS the MAX5 memory blade.

- HX5 Blade Server

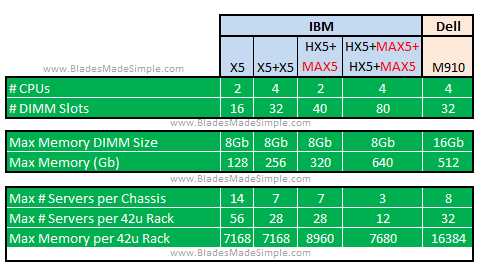

I’ve spent considerable time on previous blogs detailing the IBM HX5, so please jump over to those links to dig into the specifics, but at a high level, the HX5 is IBM’s 2 CPU blade server that offers the Intel Xeon 7500 CPU. The HX5 is a 30mm, “single wide” blade server therefore you can fit up to 14 in an IBM BladeCenter H blade chassis.

- MAX5

The MAX5 offering from IBM can be thought of as a “memory expansion blade.” Offering an additional 24 memory DIMM slots, the MAX5, when coupled with the HX5 blade server, provides a total of 40 memory DIMMs. The MAX5 is a standard “single wide”, 30mm form factor, so when it is used with a single HX5, two IBM BladeCenter H server bays are required in the chassis.

DELL

Dell’s approach to extended memory is a bit different. Instead of relying on a memory blade, Dell starts with the M910 blade server and allows users to use 2 CPUs plus their FlexMem Bridge to access the memory DIMMs of the 3rd and 4th CPU sockets. For details on the FlexMem Bridge, check out my previous post.

- PowerEdge 11G M910 Blade Server

The M910 is a 4 CPU capable blade server with 32 memory DIMMs. This blade server is a full-height server, so you can fit 8 servers inside the Dell M1000e blade chassis.

The Face-Off

ROUND 1 – Memory Capacity

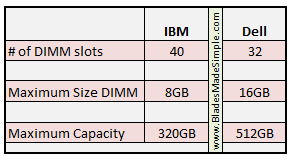

When we compare the memory DIMMs available on each, we see that Dell’s offering comes up with 32 DIMMs vs IBM’s 40 DIMMs. However, IBM’s solution of using the HX5 blade server + the MAX5 memory expansion currently supports a maximum DIMM size of 8GB, whereas Dell supports a maximum DIMM size of 16GB. While this may change in the future, as of today, Dell has the edge so I have to claim:

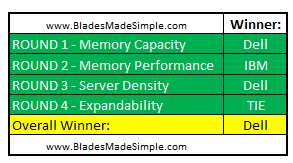

Round 1 Winner: Dell
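For anyone who wants to check the math behind this round, here is a quick sketch. It assumes the HX5 itself carries 16 DIMM slots (the 40 total minus the MAX5’s 24) and that every slot is filled with the largest DIMM quoted above; the function and variable names are mine, purely for illustration.

```python
# Figures come from the comparison above; names are illustrative only.
def max_memory_gb(dimm_slots: int, max_dimm_gb: int) -> int:
    """Maximum memory of one system: slot count times the largest supported DIMM."""
    return dimm_slots * max_dimm_gb


# Assumption: HX5 = 16 slots (40 total minus the 24 on the MAX5).
ibm_hx5_max5 = max_memory_gb(dimm_slots=16 + 24, max_dimm_gb=8)
dell_m910 = max_memory_gb(dimm_slots=32, max_dimm_gb=16)

print(f"IBM HX5 + MAX5: {ibm_hx5_max5} GB")
print(f"Dell M910:      {dell_m910} GB")
```

With these inputs, IBM’s extra DIMM slots do not make up for the smaller DIMM size, which is why the round goes to Dell.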

ROUND 2 – Memory Performance

As several commenters pointed out on my Dell FlexMem Bridge post the other day, memory performance needs to be considered when comparing technologies. Dell’s FlexMem Bridge offering runs at a maximum memory speed of 1066MHz (corrected from the 833MHz I originally reported), but the speed is dependent upon the speed of the processor. A processor with a 6.4GT/s QPI supports memory at 1066MHz; a processor with a 5.8GT/s QPI supports memory at 978MHz; and a processor with a 4.8GT/s QPI runs memory at 800MHz. This is a component of Intel’s Xeon 7500 architecture, so it should be the same regardless of the server vendor. Looking at IBM, we see the HX5 blade server memory runs at a maximum of 978MHz. However, when you attach the MAX5 to the HX5 for the additional memory slots, the memory runs at 1066MHz, regardless of the speed of the CPU installed. While this appears to be black magic, it’s really the result of IBM’s proprietary eXa scaling, something that I’ll cover in detail at a later date. Although the HX5 blade server memory, when used by itself, does not have the ability to achieve 1066MHz, this comparison is based on the Dell PowerEdge 11G M910 vs the IBM HX5+MAX5. With that in mind, the ability to run the expanded memory at 1066MHz gives IBM the edge in this round.

Round 2 Winner: IBM
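The QPI-to-memory-speed relationship described in this round is really just a lookup table. Here is a minimal sketch of it, using only the three speed pairs quoted above; the names are hypothetical and this is not taken from any Intel, Dell or IBM tool.

```python
# QPI link speed (GT/s) -> memory speed (MHz), exactly as quoted in this post.
QPI_TO_MEMORY_MHZ = {6.4: 1066, 5.8: 978, 4.8: 800}

# Per the post, memory on the MAX5 runs at this speed regardless of the CPU.
MAX5_MEMORY_MHZ = 1066


def memory_speed_mhz(qpi_gt_s: float) -> int:
    """Return the memory speed quoted for a given QPI speed."""
    try:
        return QPI_TO_MEMORY_MHZ[qpi_gt_s]
    except KeyError:
        raise ValueError(f"no memory speed quoted for QPI {qpi_gt_s} GT/s") from None


for qpi in sorted(QPI_TO_MEMORY_MHZ, reverse=True):
    print(f"{qpi} GT/s QPI -> {memory_speed_mhz(qpi)} MHz memory "
          f"(MAX5 memory: {MAX5_MEMORY_MHZ} MHz)")
```

The point of the round shows up in the slower rows: with a 4.8GT/s processor the table gives 800MHz, while the MAX5 memory stays at 1066MHz.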

ROUND 3 – Server Density

This one is pretty straightforward. IBM’s HX5 + MAX5 offering takes up 2 server bays, so in the IBM BladeCenter H, you can only fit 7 systems. You can only fit 4 BladeCenter H chassis in a 42u rack, therefore you can fit a max of 28 IBM HX5 + MAX5 systems into a rack.

The Dell PowerEdge 11G M910 blade server is a full height server, so you can fit 8 servers into the Dell M1000e chassis. 4 Dell chassis will fit in a 42u rack, so you can get 32 Dell M910s into a rack.

Round 3 Winner: Dell
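The density numbers above can be reproduced with a few lines. This is only a sketch using the bay and chassis counts from this round; I am treating the M1000e as having 8 full-height slots, and the function name is my own.

```python
def systems_per_rack(bays_per_chassis: int, bays_per_system: int,
                     chassis_per_rack: int) -> int:
    """Whole systems that fit in one rack, given how many bays each system uses."""
    systems_per_chassis = bays_per_chassis // bays_per_system
    return systems_per_chassis * chassis_per_rack


# IBM BladeCenter H: 14 bays, HX5 + MAX5 uses 2 bays, 4 chassis per 42u rack.
ibm = systems_per_rack(bays_per_chassis=14, bays_per_system=2, chassis_per_rack=4)

# Dell M1000e: 8 full-height slots, one M910 per slot, 4 chassis per 42u rack.
dell = systems_per_rack(bays_per_chassis=8, bays_per_system=1, chassis_per_rack=4)

print(f"IBM HX5 + MAX5 per rack: {ibm}")
print(f"Dell M910 per rack:      {dell}")
```

The integer division matters once a system spans more bays than divide evenly into the chassis, since any leftover bays cannot hold a partial system.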

(NEW) ROUND 4 – Expandability

It was mentioned several times in the comments that expandability should have been reviewed as well. When we look at Dell’s design, we see there are two expansion options: run the Dell PowerEdge 11G M910 blade with 2 processors and the FlexMem Bridge, or run it with 4 processors and remove the FlexMem Bridge.

The modular design of the IBM eX5 architecture allows a user to add memory (MAX5), add processors (2nd HX5) or both (2 x HX5 + 2 x MAX5). This provides users with a lot of flexibility to choose a design that meets their workload.

Choosing a winner for this round is tough, as there are different ways to look at this:

Maximum CPUs in a server: TIE – both IBM and Dell can scale to 4 CPUs.

Maximum CPU density in a 42u rack: Dell wins with 32 x 4 CPU servers vs IBM’s 12.

Maximum Memory in a server: IBM with 640GB using 2 x HX5 and 2 x MAX5

Max Memory density in a 42u Rack: Dell wins with 16TB

Round 4 Winner: TIE
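To show where the per-server and per-rack memory figures in this round come from, here is a rough sketch for the 4 CPU configurations. It assumes 16 DIMM slots per HX5 and 24 per MAX5, the maximum DIMM sizes from Round 1, and the 12 vs 32 systems per rack quoted above; the class and field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FourSocketConfig:
    name: str
    dimm_slots: int        # total DIMM slots in one 4 CPU system
    max_dimm_gb: int       # largest DIMM supported today, per the post
    systems_per_rack: int  # 4 CPU systems that fit in a 42u rack

    @property
    def memory_gb(self) -> int:
        return self.dimm_slots * self.max_dimm_gb

    @property
    def rack_memory_tb(self) -> float:
        return self.memory_gb * self.systems_per_rack / 1024


# Assumption: 16 slots per HX5 and 24 per MAX5, so 2 x HX5 + 2 x MAX5 = 80 slots.
ibm = FourSocketConfig("IBM 2 x HX5 + 2 x MAX5", 2 * 16 + 2 * 24, 8, 12)
dell = FourSocketConfig("Dell M910", 32, 16, 32)

for cfg in (ibm, dell):
    print(f"{cfg.name}: {cfg.memory_gb} GB per server, "
          f"{cfg.rack_memory_tb:.1f} TB per rack")
```

IBM comes out ahead per server and Dell comes out ahead per rack, which is why I scored this round as a tie.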

Summary

While the fight was close, with a 2 to 1 win, it is clear the overall winner is Dell. For this comparison, I tried to keep it focused on the memory aspect of the offerings.

On a final note, at the time of this writing, the IBM MAX5 memory expansion has not been released for general availability, while Dell is shipping their M910 blade server.

There may be other advantages relative to processors that were not considered for this comparison; however, I welcome any thoughts or comments you have.

Could you please also compare the price of your second round at 320 GB on both servers? That should also be a consideration.

Moreover, while Dell could have 4 CPUs with 32 DIMMs, IBM's 2 x HX5 (4 CPUs, 32 DIMMs) with 2 x MAX5 (48 DIMMs) can have up to 80 DIMMs. I didn't see this in any consideration.

Even more, if you could compare price per VM or price per SQL license, that would be another fun game.

Kevin,

Another fantastic post. I definitely appreciate the neutral technical comparisons you bring to the table on your posts. Your blog quickly made it to my 'must read' list after I discovered it.

Joe

Just a clarification on memory capacity: in a 2-socket configuration, HX5 with MAX5 can actually support up to 64 DIMM slots (using 2x HX5 blades, each with a single processor, and 2x MAX5 blades). Each CPU has its 8 directly attached DIMMs plus each MAX5 adds 24 extra DIMMs. So, if max memory capacity is the primary requirement, even with 8GB DIMMs HX5 with MAX5 can match Dell's capacity. You trade off density, but you also save on not having to buy expensive 16GB DIMMs. Also, in a 4-socket configuration, Dell M910 still maxes out at 32 DIMMs, whereas HX5 with MAX5 can provide up to 80 DIMMs (2x HX5 + 2x MAX5). So, in a 4-socket configuration, not only does the HX5 have higher memory capacity using 8GB DIMMs vs. 16GB DIMMs, but, as pointed out, it also has faster memory performance.

#ibm MAX5 vs #dell FlexMem Bridge – you bring up a good point; however, using a single CPU on the HX5 with the MAX5 expansion blade will require the MAX5 memory to be connected over a single QPI connection. Running 40 memory DIMMs over a single QPI connection could lead to a bottleneck very quickly. Also, with a 2 x HX5 + 2 x MAX5 design, you'll only be able to fit 3 into a BladeCenter H. That means a max of 12 in a 42u rack. Realistically, it would be more cost efficient to use an IBM x3850, or even a soon-to-be-released IBM x3690. Thanks for the comments and for reading.

I'd love to do a price comparison, but the #ibm MAX5 is not shipping yet. When it does I'll see if I can do a comparison in price. Thanks for the comments, and thanks for reading!

wait for the new HP blades based on Intel Xeon 7500 CPU ,gonna be a hit

I hear #HP blades with Xeon 7500 won't be coming until September. That's a long time to wait. Hope they'll be adding some innovation that competes with #dell and #ibm 's extended memory offerings in the Xeon 7500 family. Thanks for the comment!

#ibm HX5 vs #dell M910 – Thanks for reading, Joe. I appreciate the kind words.

Have you actually LOOKED at 16GB DIMM prices? at over $1500 PER DIMM web price, I don't see many blades filling up with those. Much cheaper to actually just buy a second blade!

And, you can't just install a pair at a time and hope to grow, not without cutting memory I/O performance dramatically. It's all or nothing, and somehow, I don't see standard budget Dell customers willing to pay over $50k per blade….

Proteus,

Agree that you don't see many blades filling up with 16GB DIMMs, but 16GB prices in the industry are dropping substantially, you WILL see it (actually, you may not as IBM doesn't support 16GB DIMMs on HX5). if you look at IBM's site you see you/they charge $749 for an 8GB DIMM and $1500 for a 16GB DIMM, so from IBM perspective price per GB is ~ the same for 8GB & 16GB. would you say people dont fill blades up with 8GB dimms?

with IBM's memory pricing, fact that HX5 only supports expensive SSDs ($1000 a pop for the lowest cost drive??? i could almost buy an IBM 16GB dimm for that), and throw in the price of MAX5 (who knows what that will be) i think we'll be very competitive w/ M910 up to 512GB. Thanks for the concern for our “budget” customers..

Mike

#IBM MAX5 vs #dell M910 blade server memory – you bring up a good point that 16GB DIMMs are expensive. If you looked at doing 8GB DIMMs for both the IBM and Dell offerings (above) you would have Dell coming in at 8TB of memory per 42U rack (256GB x 32 systems) vs IBM's 7.5TB (640GB x 12 systems). Dell's offering would still offer the most memory per 42U rack, even with 8GB DIMMs. What I DON'T know is whether that COST would be more than, equal to or less than IBM's, because they don't have the MAX5 out yet. Once IBM releases the MAX5, then we can have a price comparison between IBM and Dell's offerings using 8GB DIMMs. Thanks for contributing to the comments – you and Mike are making this a very interesting discussion!

I would rather have a full rack with 12 CPUs and 7.5TB of RAM than a rack with 32 CPUs and 16TB of RAM. Why? More memory per core/CPU, means higher utilization levels on the CPUs, means more virtual machines on a server, means less software licensing costs, means less upfront hardware costs, means less power and cooling needed.

I don't see the point of comparing how many CPUs you can fit into a 42u rack, because the real world problem is that customers need to decrease their hardware footprint and do more with less, given the energy and management challenges that many customers face today.

I think this whole test is rather unfair, because you could include Rounds where one vendor's offering is clearly more beneficial given real world problems, and remove Rounds that have no importance or bearing in the real world, altering the overall result. Maybe the foundation of the test should be based on a customer scenario instead; I wonder how things would fare then?

Mike, two points.

First, as you know, few people actually pay web pricing for servers and options. There are almost always discounts. Secondly, SSDs are primarily for high IOPS, SQL or analytical workloads. Virtualization customers almost always SAN boot, or use embedded hypervisors.

And, yes, I look forward to seeing what HP brings to the table, will be nice to make this a three horse race.

#IBM can fit 12 blade servers in a rack when using 4 processors and 640GB of RAM. This comes out to 160GB per CPU (per server). On the other hand, Dell can fit 32 blade servers in a rack when using 4 processors and 512GB of RAM, or 128GB per CPU (per server). IBM has the greater RAM per CPU in the designs, but Dell can fit more servers into the rack. I think I was very fair in my write-up, as it compares the features and differences between the two offerings. Based on the facts, Dell has the advantage. That being said, I agree that a customer's real world problems will vary and that their preference of server will be dependent upon what their needs are. I appreciate you reading and for the comments.

Kevin,

That's a bit disingenuous. IBM fits 7 4-socket systems per chassis, translating to 28 blades in the “standard” 42U rack form factor, or 35 blades in a 45U rack. 256GB is plenty for the vast majority of customers. Marketing types can play games all they want, but every customer is different. Some customers couldn't care less about space, others can only put 1-2 chassis per rack due to power issues, others need maximum RAM density per rack, or maximum compute/IO density per rack. That's why we have choices. Blades are NOT a slam dunk for everything. I know companies that refuse to touch them, but the IBM “build up/modular” solution seems to be the best all around at the moment. As lightnrythem mentions though, it all boils down to what software is running. SQL, Analytics/BI, email, HPC, Virt/Cloud all have very, very different needs, with different metrics. Sometimes, hardware cost is just a drop in the bucket. Oracle is what, $60k/socket nowadays? VMware is another case in point. How does that Dell M910 with expensive 16GB DIMMs look now, when coupled with a 2X VMware licensing cost? Want to bet 256GB won't be the sweet spot?

Kevin,

I think you should include another round for flexibility. IBM has a feature called FlexNode that allows you to dynamically decouple a 4 socket blade (2 HX5s slammed together), and see it as 2 2socket servers, and dynamically couple them together again to form a 4 socket server without touching the hardware. This means you can run different workloads at different times of the day – gives you flexibility over how you want to run your workloads optimally. It also acts as a high availability tool in case 1 node of a 4 socket blade fails, in which case an automatic reboot is initiated on the failed node, and the live node takes ownership of the workload until the problem is fixed.

On the Dell side, you're stuck with a monolithic 4 socket configuration and there is no flexibility to do the above.

Winner: IBM

Overall – Dell 2: IBM 2 – TIE

:)
