I recently had some discussions with a customer looking to connect a Dell EMC PowerEdge M1000e to a Cisco Nexus, and I was quite surprised at the number of resources available to assist in the project. Below you will find links to the documents I found, in the hope that they will help you out in the near future.

Tag Archives: FCoE

Blade Server Networking Options

If you are new to blade servers, you may find there are quite a few options to consider for managing your Ethernet traffic. Some vendors promote traditional integrated switching, while others promote extending the fabric to a Top of Rack (ToR) device. Each method has its own benefits, so let me explain what those are. Before I get started: although I work for Dell, this blog post is designed to be an unbiased review of the network options available from many blade server vendors.

IBM Announces New Blade Server – HS23

IBM officially announced their new blade, the HS23 blade server, and it comes with some improvements.

Dell Announces Converged 10GbE Switch for M1000e

Updated 1/27/2011

Dell quietly announced the addition of a 10 Gigabit Ethernet (10GbE) switch module, known as the M8428-k. This blade module advertises 600 ns low-latency, wire-speed, 10GbE performance, Fibre Channel over Ethernet (FCoE) switching, and low-latency 8 Gb Fibre Channel (FC) switching and connectivity.

IBM Announces Emulex Virtual Fabric Adapter for BladeCenter…So?

Emulex and IBM announced today the availability of a new Emulex expansion card for blade servers that allows up to 8 virtual NICs to be assigned to each physical NIC. The “Emulex Virtual Fabric Adapter for IBM BladeCenter (IBM part # 49Y4235)” is a CFF-H expansion card based on industry-standard PCIe architecture that can operate as a “Virtual NIC Fabric Adapter” or as a dual-port 10 Gb or 1 Gb Ethernet card.


When operating as a Virtual NIC (vNIC), each of the 2 physical ports appears to the blade server as 4 virtual NICs, for a total of 8 virtual NICs per card. According to IBM, the default bandwidth for each vNIC is 2.5 Gbps. The cool feature of this mode is that the bandwidth for each vNIC can be configured from 100 Mbps to 10 Gbps, up to a maximum of 10 Gb per virtual port. The one catch with this mode is that it ONLY operates with the BNT Virtual Fabric 10Gb Switch Module, which provides independent control for each vNIC. This means no connection to Cisco Nexus…yet. According to Emulex, firmware updates coming later (Q1 2010??) will allow this adapter to handle FCoE and iSCSI as a feature upgrade. Not sure if that means compatibility with Cisco Nexus 5000 or not. We’ll have to wait and see.
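To make the vNIC numbers above concrete, here is a minimal Python sketch that checks a bandwidth plan for one physical port. The 4 vNICs per port, the 2.5 Gbps default and the 100 Mbps to 10 Gbps range come from the description above. The function names are hypothetical, and the check that the vNICs on a port together stay within 10 Gbps is my own assumption for illustration, not something IBM or Emulex confirm here.

```python
MIN_VNIC_MBPS = 100        # lower bound quoted above
MAX_VNIC_MBPS = 10_000     # upper bound quoted above
VNICS_PER_PORT = 4         # each physical port appears as 4 vNICs
DEFAULT_VNIC_MBPS = 2_500  # default per vNIC, according to IBM


def default_plan():
    """Return the default bandwidth plan (in Mbps) for one physical port."""
    return [DEFAULT_VNIC_MBPS] * VNICS_PER_PORT


def check_plan(plan_mbps, port_capacity_mbps=10_000):
    """Return a list of problems found in a per-port vNIC bandwidth plan.

    The port_capacity_mbps check is an assumption of this sketch.
    """
    problems = []
    if len(plan_mbps) != VNICS_PER_PORT:
        problems.append(f"expected {VNICS_PER_PORT} vNICs, got {len(plan_mbps)}")
    for index, mbps in enumerate(plan_mbps):
        if not MIN_VNIC_MBPS <= mbps <= MAX_VNIC_MBPS:
            problems.append(f"vNIC {index}: {mbps} Mbps is outside the allowed range")
    if sum(plan_mbps) > port_capacity_mbps:
        problems.append(f"plan totals {sum(plan_mbps)} Mbps, more than the port carries")
    return problems


if __name__ == "__main__":
    print(check_plan(default_plan()))            # default plan: no problems
    print(check_plan([50, 2500, 2500, 2500]))    # one vNIC below 100 Mbps
```

Note that the default plan of 4 x 2.5 Gbps adds up to exactly 10 Gbps, which is why the assumed per-port limit seemed like a reasonable guess.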

When used as a normal Ethernet adapter (10Gb or 1Gb), aka “pNIC mode”, the card is viewed as a standard 10 Gbps or 1 Gbps 2-port Ethernet expansion card. The big difference here is that it will work with any available 10 Gb switch or 10 Gb pass-thru module installed in I/O module bays 7 and 9.

So What?

I’ve known about this adapter since VMworld, but I haven’t blogged about it because I just don’t see a lot of value. HP has had this functionality for over a year now in their Virtual Connect Flex-10 offering, so this technology is nothing new. Yes, it would be nice to set up a NIC in VMware ESX that only uses 200 Mb of a pipe, but what’s the difference between a fake NIC that “thinks” it can only use 200 Mb and a big fat 10Gb pipe for all of your I/O traffic? I’m just not sure, but I am open to any comments or thoughts.


REVEALED: IBM's Nexus 4000 Switch: 4001I (Updated)

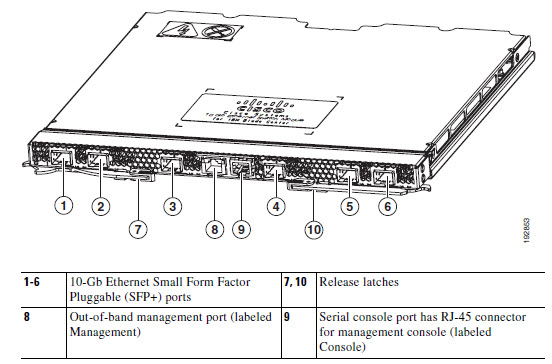

Finally – information on the soon-to-be-released Cisco Nexus 4000 switch for IBM BladeCenter. Apparently IBM is officially calling their version “Cisco Nexus Switch Module 4001I for the IBM BladeCenter.” I’m not sure if it’s “officially” announced yet, but I’ve uncovered some details. Here is a summary of the Cisco Nexus Switch Module 4001I for the IBM BladeCenter:

- Six external 10-Gb Ethernet ports for uplink

- 14 internal XAUI ports for connection to the server blades in the chassis

- One 10/100/1000BASE-T RJ-45 copper management port for out-of-band management link (this port is available on the front panel next to the console port)

- One external RS-232 serial console port (this port is available on the front panel and uses an RJ-45 connector)

More tidbits of info:

- The switch will be capable of forwarding Ethernet and FCoE packets at wire rate speed.

- The six external ports will be SFP+ (no surprise) and they’ll support 10GBASE-SR SFP+, 10GBASE-LR SFP+, 10GBASE-CU SFP+ and GE-SFP.

- Internal port speeds can run at 1 Gb or 10Gb (and can be set to auto-negotiate); full duplex

- Internal ports will be able to forward Layer-2 packets at wire rate speed.

- The switch will work in the IBM BladeCenter “high-speed bays” (bays 7, 8, 9 and 10); however at this time, the available Converged Network Adapters (CNAs) for the IBM blade servers will only work with Nexus 4001I’s located in bays 7 and 9.
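One thing the port counts above imply is the uplink oversubscription of the module. The small Python sketch below works it out from the 14 internal and 6 external ports listed; the function name is hypothetical, and it assumes every port runs at the stated speed with all blades transmitting at once, which is a worst case rather than a measured figure.

```python
from fractions import Fraction


def oversubscription(internal_ports, external_ports,
                     internal_gbps=10, external_gbps=10):
    """Return the ratio of total internal bandwidth to total uplink bandwidth."""
    return Fraction(internal_ports * internal_gbps,
                    external_ports * external_gbps)


if __name__ == "__main__":
    # 14 internal ports and 6 external uplinks, all at 10 Gb
    ratio_10g = oversubscription(14, 6)
    print(f"internal at 10 Gb: {float(ratio_10g):.2f}:1")

    # Same module with the internal ports running at 1 Gb
    ratio_1g = oversubscription(14, 6, internal_gbps=1)
    print(f"internal at 1 Gb: {float(ratio_1g):.2f}:1")
```

With all internal ports at 10 Gb the ratio is 7:3, so the uplinks are oversubscribed by a little over 2 to 1; with the internal ports at 1 Gb the six uplinks have far more capacity than the blades can fill.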

There is also mention of a “Nexus 4005I” from IBM, but I can’t find anything on that. I do not believe that IBM has announced this product, so the information provided is based on documentation from Cisco’s web site. I expect an announcement to come in the next 2 weeks, though, with availability probably following in November, just in time for the Christmas rush!

For details on the information mentioned above, see the document on the Cisco web site titled “Cisco Nexus 4001I and 4005I Switch Module for IBM BladeCenter Hardware Installation Guide”.

If you are interested in finding out more about configuring NX-OS for the Cisco Nexus Switch Module 4001I for the IBM BladeCenter, check out the Cisco Nexus 4001I and 4005I Switch Module for IBM BladeCenter NX-OS Configuration Guide.

UPDATE (10/20/09): the IBM part # for the Cisco Nexus 4001I Switch Module will be 46M6071.

UPDATE # 2 (10/20/09, 5:37 PM EST): Found more Cisco links:

Cisco Nexus 4001I Switch Module At A Glance

Cisco Nexus 4001I Switch Module DATA SHEET

New Picture:
