In 1965, Gordon Moore predicted that engineers would be able to double the number of components on a microchip every two years. Known as Moore’s law, his prediction has come true – processors continue to become faster each year while their components become smaller and smaller. In the footprint of the original ENIAC computer, we can today fit thousands of CPUs that offer a trillion more computations per second at a fraction of the cost. This continued trend is allowing server manufacturers to shrink the footprint of the typical x86 blade server, making room for more I/O expansion, more CPUs and more memory. Will this continued trend allow blade servers to gain market share, or could it possibly be the end of rack servers? My vision of the next generation data center could answer that question.

Tag Archives: cna

Dell Network Daughter Card (NDC) and Network Partitioning (NPAR) Explained

If you are a reader of BladesMadeSimple, you are no stranger to Dell’s Network Daughter Card (NDC), but if it is a new term for you, let me give you the basics. Up until now, blade servers came with network interface cards (NICs) pre-installed as part of the motherboard. Most servers came standard with dual-port 1Gb Ethernet NICs on the motherboard, so if you invested in 10Gb Ethernet (10GbE) or other converged technologies, the onboard NICs were stuck at 1Gb Ethernet. As technology advanced and 10Gb Ethernet became more prevalent in the data center, blade servers entered the market with 10GbE standard on the motherboard. If, however, you weren’t implementing 10GbE, then you found yourself paying for technology that you couldn’t use. Basically, whatever came standard on the motherboard is what you were stuck with – until now.

Why Are Dell’s Blade Servers “Different”?

I’ve learned over the years that it is very easy to focus on the feeds and speeds of a server while overlooking features that truly differentiate. When you take a look under the covers, a server’s CPU and memory are going to be equal to the competition, so the innovation that goes into the server is where the focus should be. On Dell’s community blog, Rob Bradfield, a Senior Blade Server Product Line Consultant in Dell’s Enterprise Product Group, discusses some of the innovation and reliability that goes into Dell blade servers. I encourage you to take a look at Rob’s blog post at http://dell.to/mXE7iJ.

Dell Announces Blade Refresh and NIC Partitioning (NPAR)

Dell announced today a refresh of the PowerEdge M910 blade server based on the Intel Xeon E7 processor. The M910 is a full-height blade that can hold 512GB of RAM across 32 DIMMs. The refreshed M910 blade server will also feature Dell’s FlexMem bridge that enables users to use all 32 DIMM slots with only 2 CPUs. You can read more about the M910 blade server in an earlier blog post of mine here.

According to the Dell press release issued today…

Dell Announces Converged 10GbE Switch for M1000e

Updated 1/27/2011

Dell quietly announced the addition of a 10 Gigabit Ethernet (10GbE) switch module, known as the M8428-k. This blade module advertises 600 ns low-latency, wire-speed, 10GbE performance, Fibre Channel over Ethernet (FCoE) switching, and low-latency 8 Gb Fibre Channel (FC) switching and connectivity.

More HP and IBM Blade Rumours

I wanted to post a few more rumours before I head out to HP in Houston for “HP Blades and Infrastructure Software Tech Day 2010” so that it doesn’t appear that I got the info from HP. NOTE: this is purely speculation. I have no definitive information from HP, so this may be false info.

First off – the HP Rumour:

I’ve caught wind of a secret that may be truth, may be fiction, but I hope to find out for sure from the HP blade team in Houston. The rumour is that HP’s development team currently has a Cisco Nexus Blade Switch Module for the HP BladeSystem in their lab, and they are currently testing it out.

Now, this seems far-fetched, especially with the news of Cisco severing partner ties with HP. However, it seems that news tidbit was talking only about products sold with the HP label but made by Cisco (OEM). HP will continue to sell Cisco Catalyst switches for the HP BladeSystem and even Cisco branded Nexus switches with HP part numbers (see this HP site for details). I have some doubt about this rumour of a Cisco Nexus Switch that would go inside the HP BladeSystem, simply because I am 99% sure that HP is announcing a Flex10 type of BladeSystem switch that will allow converged traffic to be split out, with the Ethernet traffic going to the Ethernet fabric and the Fibre traffic going to the Fibre fabric (check out this rumour blog I posted a few days ago for details). I guess only time will tell.

The IBM Rumour:

A few days ago I posted a rumour blog that discusses HP’s next generation adding Converged Network Adapters (CNAs) to the motherboard on the blades (in lieu of the 1Gb or Flex10 NICs). Well, now I’ve uncovered a rumour that IBM is planning on following later this year with blades that will also have CNAs on the motherboard. This is huge! Let me explain why.

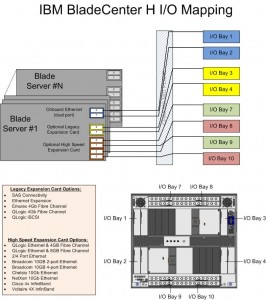

In the design of IBM’s BladeCenter E and BladeCenter H, the 1Gb NICs onboard each blade server are hard-wired to I/O Bays 1 and 2 – meaning only Ethernet modules can be used in these bays (see the image to the left for details). However, I/O Bays 1 and 2 are for “standard form factor I/O modules” while I/O Bays 7 through 10 are for “high speed form factor I/O modules”. This means that I/O Bays 1 and 2 cannot handle “high speed” traffic, i.e. converged traffic.

This means that IF IBM comes out with a blade server that has a CNA on the motherboard, either:

a) the blade’s CNA will have to route to I/O Bays 7-10

OR

b) IBM’s going to have to come out with a new BladeCenter chassis that allows the high speed converged traffic from the CNAs to connect to a high speed switch module in Bays 1 and 2.

So let’s think about this. If IBM (and HP for that matter) does put CNAs on the motherboard, is there a need for additional mezzanine/daughter cards? Without them, the blade servers could have more real estate for memory, or more processors. If there are no extra daughter cards, then there’s no need for additional I/O module bays. This means the blade chassis could be smaller and use less power – something every customer would like to have.

I can really see the blade market moving toward this type of design (not surprisingly, very similar to Cisco’s UCS design) – one where only a pair of redundant “modules” are needed to split converged traffic to their respective fabrics. Maybe it’s all a pipe dream, but when it comes true in 18 months, you can say you heard it here first.

Thanks for reading. Let me know your thoughts – leave your comments below.

HP BladeSystem Rumours

I’ve recently posted some rumours about IBM’s upcoming announcements in their blade server line. Now it is time to let you know some rumours I’m hearing about HP. NOTE: this is purely speculation. I have no definitive information from HP, so this may be false info. That being said – here we go:

Rumour #1: Integration of “CNA” like devices on the motherboard.

As you may be aware, with the introduction of the “G6”, or Generation 6, of HP’s blade servers, HP added “FlexNICs” onto the servers’ motherboards instead of the 2 x 1Gb NICs that are standard on most of the competition’s blades. FlexNICs allow for the user to carve up a 10Gb NIC into 4 virtual NICs when using the Flex-10 Modules inside the chassis. (For a detailed description of Flex-10 technology, check out this HP video.) The idea behind Flex-10 is that you have 10Gb connectivity that allows you to do more with fewer NICs.
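To make the carving idea concrete, here is a minimal sketch of splitting one 10Gb port into up to four virtual NICs. The only facts taken from the post are the 10Gb port and the limit of 4 virtual NICs; the names `VirtualNic` and `validate_partitions`, the traffic labels and the bandwidth figures are made up for illustration and are not HP's actual configuration interface.

```python
from dataclasses import dataclass

PORT_CAPACITY_MB = 10_000  # one 10Gb physical port, as described in the post
MAX_PARTITIONS = 4         # a 10Gb NIC is carved into at most 4 virtual NICs


@dataclass
class VirtualNic:
    name: str           # hypothetical label for the traffic type
    bandwidth_mb: int   # bandwidth assigned to this virtual NIC, in Mb/s


def validate_partitions(vnics):
    """Check a proposed layout and return the unallocated bandwidth in Mb/s."""
    if len(vnics) > MAX_PARTITIONS:
        raise ValueError(f"at most {MAX_PARTITIONS} virtual NICs per port")
    if any(v.bandwidth_mb <= 0 for v in vnics):
        raise ValueError("each virtual NIC needs a positive bandwidth")
    total = sum(v.bandwidth_mb for v in vnics)
    if total > PORT_CAPACITY_MB:
        raise ValueError(f"layout asks for {total} Mb/s on a {PORT_CAPACITY_MB} Mb/s port")
    return PORT_CAPACITY_MB - total


# Illustrative layout only; the split between traffic types is an assumption.
layout = [
    VirtualNic("management", 500),
    VirtualNic("vmotion", 2_000),
    VirtualNic("vm-traffic", 4_000),
    VirtualNic("storage", 3_500),
]
unallocated_mb = validate_partitions(layout)
print(f"Unallocated bandwidth: {unallocated_mb} Mb/s")
```

The point of the sketch is the constraint, not the numbers: the four virtual NICs share one physical pipe, so their combined bandwidth can never exceed the 10Gb port.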

SO – what’s next? Rumour has it that the “G7” servers, expected to be announced on March 16, will have an integrated CNA or Converged Network Adapter. With a CNA on the motherboard, both the Ethernet and the Fibre traffic will have a single integrated device to travel over. This is a VERY cool idea because this announcement could lead to a blade server that eliminates the additional daughter card or mezzanine expansion slots, freeing up valuable real estate for newer Intel CPU architecture.

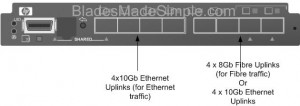

Rumour #2: Next generation Flex-10 Modules will separate Fibre and Network traffic.

Today, HP’s Flex-10 ONLY handles Ethernet traffic. There is no support for FCoE (Fibre Channel over Ethernet), so if you have a Fibre network, then you’ll also have to add a Fibre Switch into your BladeSystem chassis design. If HP does put a CNA that carries Fibre and Ethernet traffic onto their next generation blade servers, wouldn’t it make sense that there would need to be a module in the BladeSystem chassis that allows the storage and Ethernet traffic to exit?

I’m hearing that a new version of the Flex-10 Module is coming, very soon, that will allow for the Ethernet AND the Fibre traffic to exit out the switch. (The image to the right shows what it could look like.) The switch would allow for 4 of the uplink ports to go to the Ethernet fabric and the other 4 ports of the 8 port Next Generation Flex-10 switch to either be dedicated to a Fibre fabric OR used for additional 4 ports to the Ethernet fabric.

If this rumour is accurate, it could shake up things in the blade server world. Cisco UCS uses 10Gb Data Center Ethernet (Ethernet plus FCoE). IBM BladeCenter has the ability to do a 10Gb plus Fibre switch fabric (like HP) or it can use 10Gb Enhanced Ethernet plus FCoE (like Cisco). However, no one currently has a device to split the Ethernet and Fibre traffic at the blade chassis. If this rumour is true, then we should see it announced around the same time as the G7 blade server (March 16).

That’s all for now. As I come across more rumours, or information about new announcements, I’ll let you know.

Cisco, EMC and VMware Announcement – My Thoughts

By now I’m sure you’ve read, heard or seen Tweeted the announcement that Cisco, EMC and VMware have come together and created the Virtual Computing Environment coalition. So what does this announcement really mean? Here are my thoughts:

Greater Cooperation and Compatibility

Since these 3 top IT giants are working together, I expect to see greater cooperation between all three vendors, which will lead to a better understanding of what each vendor is offering. More important, though, is that we’ll have reference architectures that can be a starting point for designing a robust datacenter. This will help to validate that an “optimized datacenter” is a solution that every customer should consider.

Technology Validation

With the introduction of the Xeon 5500 processor from Intel earlier this year and the announcement of the Nehalem EX coming early in Q1 2010, the ability to add more and more virtual machines onto a single host server is becoming more prevalent. No longer is the processor or memory the bottleneck – now it’s the I/O. With the introduction of Converged Network Adapters (CNAs), servers now have access to Converged Enhanced Ethernet (CEE) or DataCenter Ethernet (DCE) providing up to 10Gb of bandwidth running at 80% efficiency with lossless packets. With this lossless ethernet, I/O is no longer the bottleneck.

VMware offers the top selling virtualization software, so it makes sense they would be a good fit for this solution.

Cisco has a Unified Computing System that offers the ability to connect a server running a CNA to an Interconnect switch that allows the data to be split out into Ethernet and storage traffic. It also has a building block design to allow for ease of adding new servers – a key message in the Coalition announcement.

EMC offers a storage platform that will handle the storage traffic from the Cisco UCS 6120XP Interconnect Switch, and they have a vested interest in VMware and Cisco, so this marriage of the 3 top IT vendors is a great fit.

Announcement of Vblock™ Infrastructure Packages

According to the announcement, the Vblock Infrastructure Package “will provide customers with a fundamentally better approach to streamlining and optimizing IT strategies around private clouds.” The packages will be fully integrated, tested, and validated, and will combine best-in-class virtualization, networking, computing, storage, security, and management technologies from Cisco, EMC and VMware with end-to-end vendor accountability. My thought on these packages is that they are really nothing new. Cisco’s UCS has been around, VMware vSphere has been around and EMC’s storage has been around. The biggest message from this announcement is that there will soon be “bundles” that will simplify customers’ solutions. Will that take away from Solution Providers’ abilities to implement unique solutions? I don’t think so. Although this new announcement does not provide any new product, it does mark the beginning of an interesting relationship between 3 top IT giants, and I think this announcement will definitely change the industry – it will be interesting to see what follows.

UPDATE – click here to check out a 3D model of the Vblock architecture.

IBM BladeCenter HS22 Delivers Best SPECweb2005 Score Ever Achieved by a Blade Server

According to IBM’s System x and BladeCenter x86 Server Blog, the IBM BladeCenter HS22 server has posted the best SPECweb2005 score ever from a blade server. With a SPECweb2005 supermetric score of 75,155, IBM has reached a mark that no other blade has achieved to date. The SPECweb2005 benchmark is designed to be a neutral, equal benchmark for evaluating the performance of web servers. According to the IBM blog, the score is derived from three different workloads:

- SPECweb2005_Banking – 109,200 simultaneous sessions

- SPECweb2005_Ecommerce – 134,472 simultaneous sessions

- SPECweb2005_Support – 64,064 simultaneous sessions

The HS22 achieved these results using two Quad-Core Intel Xeon Processor X5570 (2.93GHz with 256KB L2 cache per core and 8MB L3 cache per processor—2 processors/8 cores/8 threads). The HS22 was also configured with 96GB of memory, the Red Hat Enterprise Linux® 5.4 operating system, IBM J9 Java® Virtual Machine, 64-bit Accoria Rock Web Server 1.4.9 (x86_64) HTTPS software, and Accoria Rock JSP/Servlet Container 1.3.2 (x86_64).

It’s important to note that these results have not yet been “approved” by SPEC, the group that posts the results, but as soon as they are, they’ll be published at http://www.spec.org/osg/web2005

The IBM HS22 is IBM’s most popular blade server with the following specs:

- up to 2 x Intel 5500 Processors

- 12 memory slots for a current maximum of 96GB of RAM

- 2 hot swap hard drive slots capable of running RAID 1 (SAS or SATA)

- 2 PCI Express connectors for I/O expansion cards (NICs, Fibre HBAs, 10Gb Ethernet, CNA, etc)

- Internal USB slot for running VMware ESXi

- Remote management

- Redundant connectivity

(UPDATED) Officially Announced: IBM’s Nexus 4000 Switch: 4001I (PART 2)

I’ve gotten a lot of responses to my first post, “REVEALED: IBM’s Nexus 4000 Switch: 4001I”, and more information is coming out quickly, so I decided to post a part 2. IBM officially announced the switch on October 20, 2009, so here’s some additional information:

- The Nexus 4001I Switch for the IBM BladeCenter is part # 46M6071 and has a list price of $12,999 (U.S.) each

- In order for the Nexus 4001I switch for the IBM BladeCenter to connect to an upstream FCoE switch, an additional software purchase is required. This item will be part # 49Y9983, “Software Upgrade License for Cisco Nexus 4001I.” This license upgrade allows the Nexus 4001I to handle FCoE traffic. It has a U.S. list price of $3,899

- The Cisco Nexus 4001I for the IBM BladeCenter will be compatible with the following blade server expansion cards

  - 2/4 Port Ethernet Expansion Card, part # 44W4479

  - NetXen 10Gb Ethernet Expansion Card, part # 39Y9271

  - Broadcom 2-port 10Gb Ethernet Exp. Card, part # 44W4466

  - Broadcom 4-port 10Gb Ethernet Exp. Card, part # 44W4465

  - Broadcom 10 Gb Gen 2 2-port Ethernet Exp. Card, part # 46M6168

  - Broadcom 10 Gb Gen 2 4-port Ethernet Exp. Card, part # 46M6164

  - QLogic 2-port 10Gb Converged Network Adapter, part # 42C1830

- (UPDATED 10/22/09) The newly announced Emulex Virtual Adapter WILL NOT work with the Nexus 4001I IN VIRTUAL NIC (vNIC) mode. It will work in pNIC mode according to IBM.

The Cisco Nexus 4001I switch for the IBM BladeCenter is a new approach to getting converged network traffic. As I posted a few weeks ago in “How IBM’s BladeCenter works with Cisco Nexus 5000”, before the Nexus 4001I was announced, getting your blade servers to communicate with a Cisco Nexus 5000 required a CNA and a 10Gb Pass-Thru Module, as shown on the left. The pass-thru module used in that solution requires a direct connection from the pass-thru module to the Cisco Nexus 5000 for every blade server that needs connectivity. This means that for 14 blade servers, 14 connections are required to the Cisco Nexus 5000. This solution definitely works – it just eats up 14 Nexus 5000 ports. At $4,999 list (U.S.), plus the cost of the GBICs, the “pass-thru” scenario may be a good solution for budget conscious environments.

In comparison, with the IBM Nexus 4001I switch, we can now have as few as 1 uplink to the Cisco Nexus 5000 from the Nexus 4001I switch. This leaves more open ports on the Cisco Nexus 5000 for connections to other IBM BladeCenters with Nexus 4001I switches, or for connectivity from your rack-based servers with CNAs.

Bottom line: the Cisco Nexus 4001I switch will reduce your port requirements on your Cisco Nexus 5000 or Nexus 7000 switch by allowing up to 14 servers to uplink via 1 port on the Nexus 4001I.
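The trade-off between the two approaches can be put into numbers with a short sketch. It uses only the figures quoted in this post (14 blades, $4,999 for the pass-thru module, $12,999 for the Nexus 4001I, $3,899 for the FCoE license); the function names are invented for illustration, and the totals leave out GBICs, cabling and the cost of the Nexus 5000 ports themselves.

```python
BLADES_PER_CHASSIS = 14      # blade servers needing connectivity, per the post
PASS_THRU_LIST_USD = 4_999   # 10Gb Pass-Thru Module, U.S. list
NEXUS_4001I_LIST_USD = 12_999  # Nexus 4001I switch, U.S. list
FCOE_LICENSE_LIST_USD = 3_899  # Software Upgrade License for FCoE, U.S. list


def pass_thru_option(blades=BLADES_PER_CHASSIS):
    """Pass-thru: one Nexus 5000 port is consumed for every blade."""
    return {"nexus5000_ports": blades, "module_list_usd": PASS_THRU_LIST_USD}


def nexus_4001i_option(uplinks=1, with_fcoe_license=True):
    """Nexus 4001I: blades share the uplinks; FCoE needs the extra license."""
    cost = NEXUS_4001I_LIST_USD
    if with_fcoe_license:
        cost += FCOE_LICENSE_LIST_USD
    return {"nexus5000_ports": uplinks, "module_list_usd": cost}


pass_thru = pass_thru_option()
switched = nexus_4001i_option()

ports_saved = pass_thru["nexus5000_ports"] - switched["nexus5000_ports"]
extra_list_usd = switched["module_list_usd"] - pass_thru["module_list_usd"]

print(f"Nexus 5000 ports saved per module: {ports_saved}")
print(f"Extra list price for the switch option: ${extra_list_usd:,}")
```

Whether the freed-up Nexus 5000 ports are worth the higher module price depends on what those upstream ports cost in your environment, which is exactly the number this sketch leaves out.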

For more details on the IBM Nexus 4001I switch, I encourage you to go to the newly released IBM Redbook for the Nexus 4001I Switch.